Author: Eddy Lazzarin

Translator: Sissi

Introduction:

a16z has established an important position in the cryptocurrency field with its in-depth articles, which offer guidance for sharpening and updating our understanding of the space. Recently, a16z has been focusing on topics beyond token economics. First came a talk on “Token Design,” followed by the article “Tokenology: Beyond the Token Economy,” and now the highly anticipated “Protocol Design” course. As the course’s main speaker, Eddy Lazzarin, CTO of a16z crypto, repeatedly emphasized that the key to going beyond token economics lies in protocol design, with token design serving only as a supporting tool. Over more than an hour, he shared insights that help entrepreneurs understand the critical role protocol design plays in a project’s success. This article is a condensed version of the translation; the full translation is linked at the end.

The Inherent Laws of Protocol Evolution

Internet Protocols: The Link of Interaction

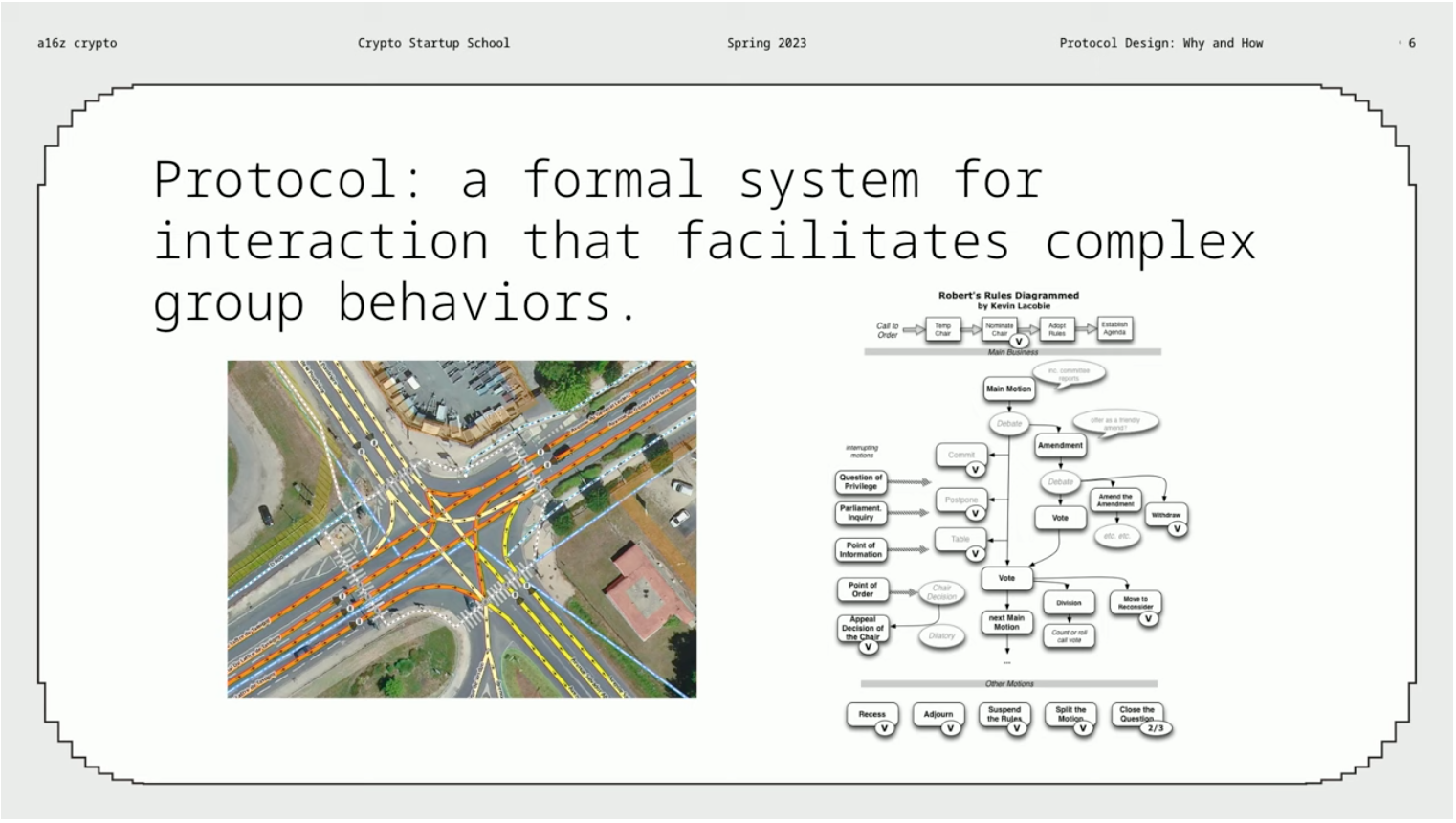

The internet is a network of many kinds of protocols. Some are straightforward, such as the HTTP state diagram, while others are quite complex, such as the interaction diagram of the Maker protocol. The figure shows a range of protocols, including internet protocols, physical protocols, and political protocols. On the left is an interaction diagram of a street intersection, a familiar and interesting example.

What these protocols have in common is that they are formalized systems of interaction that enable complex group behavior; this is the core of what a protocol is. Internet protocols are especially powerful because they mediate interactions not only between people but through software. Software is highly adaptable and efficient and can incorporate a wide range of mechanisms, so internet protocols are arguably one of the most important types of protocol, perhaps the most important.

Protocol Evolution: Web1—Web2—Web3

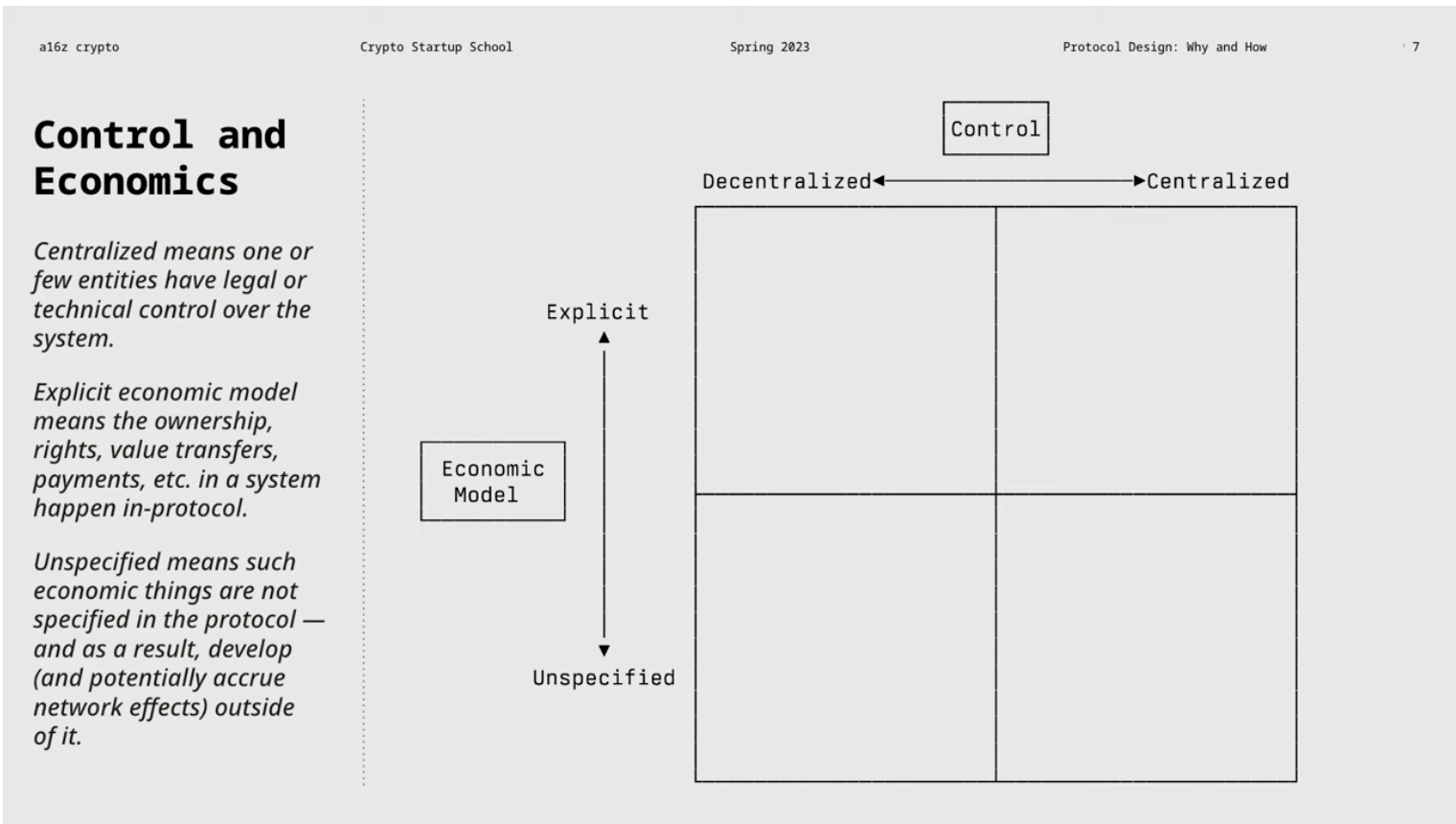

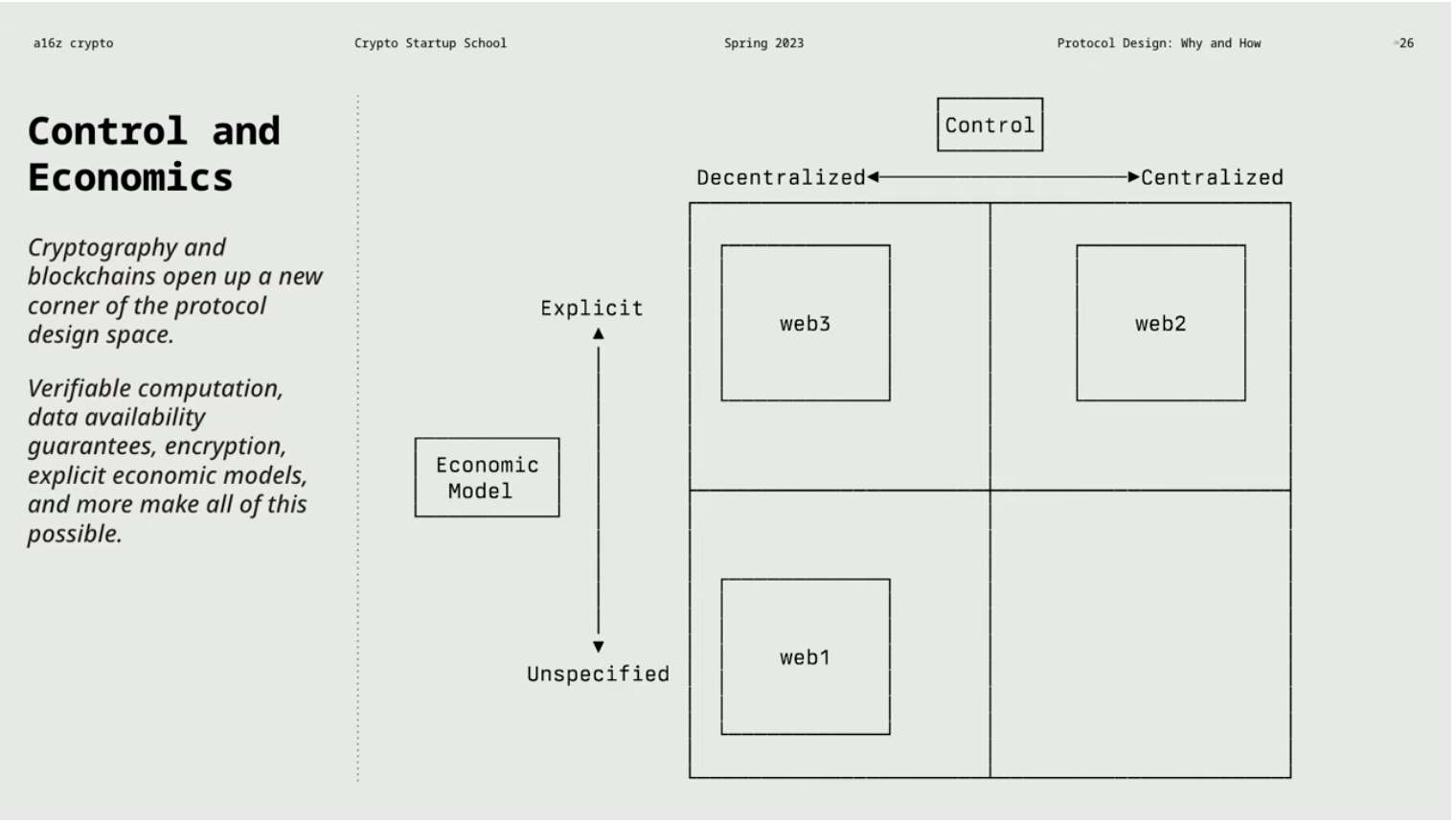

In the chart, the horizontal axis represents how decentralized or centralized a protocol is, that is, who controls it. The vertical axis represents the protocol’s economic model, specifically whether it is explicit or implicit. The difference may seem subtle, but it has important implications.

Web1: Decentralized & No clear economic model

Protocols from the Web1 era (such as NNTP, IRC, SMTP, and RSS) are neutral with respect to value flows, ownership, access rights, and payment, and have no explicit economic model. NNTP underpins Usenet, a system similar to today’s Reddit for exchanging posts and files. IRC was a widely used early chat protocol, and SMTP and RSS are used for email and content subscriptions, respectively.

Usenet organizes posts into topic-specific newsgroups. It was an important part of early internet culture and exists outside of HTTP. Using it requires a dedicated client and an internet service provider (ISP) that supports Usenet. Usenet is distributed across a large, constantly changing network of news servers that anyone can run, and posts are automatically forwarded from server to server, forming a decentralized system. Although users rarely paid directly for Usenet access, some began paying for commercial Usenet servers in the late 2000s. Overall, Usenet has no explicit economic model at the protocol level; users have to arrange payment for access on their own, outside the protocol.

These Web1 protocols share a similar architecture and stem from the same values. Even without deep familiarity, we can understand how they work, which shows the value of their readability and clear templates. Over time, however, many of them failed or changed. The failures had two main causes: they lacked features needed to compete with Web2 rivals, and they struggled to obtain funding. Ultimately, a protocol’s success or failure depends on whether it can stay decentralized while developing a sustainable economic model that pays for those features. In summary, Web1 protocols fall into the decentralized, no-explicit-economic-model category.

Network effects describe how a system gains power as it grows in scale and usage. Switching costs are the economic, cognitive, or time costs a user incurs to leave one system for another. With email, switching costs matter a great deal for Gmail users. If you use Gmail without your own domain, your address lives on Gmail’s domain, so switching costs are high. If you do have your own domain, you can switch email providers freely and keep receiving mail at the same address. Through protocol design, a company can raise switching costs by forcing or encouraging users to depend on specific components, making it less likely that they move to another provider.
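To make the email example concrete, here is a minimal Python sketch under a toy assumption: mail for each domain is routed to whichever provider the domain’s owner designates (loosely analogous to DNS MX records). The names `routing`, `owners`, `deliver`, and `switch_provider` are hypothetical. The point is that whoever owns the namespace controls the switching cost.

```python
# Toy model: mail for a domain goes to whichever provider the domain's owner
# designates (loosely analogous to DNS MX records). All names are hypothetical.
routing = {
    "gmail.com": "Gmail",      # domain owned and routed by the provider itself
    "alice.example": "Gmail",  # user-owned domain, currently hosted at Gmail
}
owners = {"gmail.com": "Google", "alice.example": "alice"}


def deliver(address: str) -> str:
    """Return the provider that receives mail for this address."""
    domain = address.split("@", 1)[1]
    return routing[domain]


def switch_provider(user: str, domain: str, new_provider: str) -> bool:
    """A user can re-route a domain only if they own it."""
    if owners[domain] != user:
        return False  # the user must abandon the address instead: high switching cost
    routing[domain] = new_provider
    return True


print(switch_provider("alice", "alice.example", "NewProvider"))  # True
print(deliver("alice@alice.example"))                            # NewProvider
print(switch_provider("bob", "gmail.com", "NewProvider"))        # False
```

Alice keeps her address when she moves; Bob can only leave by giving up his.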

Take Reddit as an example. Moderators control subreddits unilaterally, which blurs the line between decentralization and centralization. Allowing anyone to become a moderator may look like a form of decentralization, but if ultimate power remains with the administrators (the Reddit team), the system is still fully centralized. High-quality user experience does not inherently require centralized power, but it usually requires funding. In the Web1 era, decentralized protocols often failed to deliver good user experiences because they lacked funding.

Web3: Decentralization & Clear Economic Model

On Web2 platforms such as Twitter, Facebook, Instagram, or TikTok, users have limited choices and are subject to the platform’s interface decisions. How do the decentralized components introduced by Web3 change this? Cryptography and blockchain technology reduce the need for trust while making economic relationships explicit and supporting decentralization. Web3 protocols are open, interoperable, and open source, with explicit economic models and the ability to route funds into the protocol for sustainable development, rather than letting a single platform capture all the value.

For a developer, building on a system that is decentralized and has an explicit economic model is the best choice. Such a system is more likely to keep existing, and its economic relationships are visible within the protocol, so developers do not have to build them separately outside it. Stability and value capture each need to be considered in their own way. Building on a decentralized system is critical because it avoids the risks of depending on a single controlling party and lets a project be both durable and capable of becoming the largest system of its kind.

Nobody considers building on the internet a crazy endeavor today, because the internet itself is a decentralized system. Likewise, open-source programming languages and web browsers have become reliable foundations for ambitious projects. Building on centralized systems, by contrast, can constrain a project and keep it from scaling. Web3 is attracting talented developers who can build larger, more ambitious projects, and new systems or platforms that comply with regulations and have competitive advantages may emerge to compete fiercely with existing Web2 platforms.

The biggest problems with Web2 networks are their fragility and over-optimized business models. They optimize for specific metrics and ignore anything irrelevant to those metrics, which stifles innovation and new products. Their network effects are strong, but not strong enough to make them monopolies, so they become vulnerable once a competitor targets their weaknesses.

In contrast, Web3 offers more room for flexible innovation through decentralization and explicit economic models. Like a diverse rainforest ecosystem, Web3 builds infrastructure and protocols on which many interesting things can grow, providing more fertile soil for innovation. Cryptocurrencies and token economic models can reward participants’ creativity and entrepreneurial spirit, further driving the system’s development.

Web3 therefore offers better ecosystem sustainability and greater innovation potential than systems that rely solely on accumulating economic resources. Its explicit economic models and decentralization allow genuine innovation while avoiding the trap of over-optimizing and concentrating on a single area. By introducing cryptography and token economic models, Web3 gives participants more room to create and better reward mechanisms, driving the system toward more valuable and sustainable development.

Web3 Protocol Design Case

Case Background and Design Goals

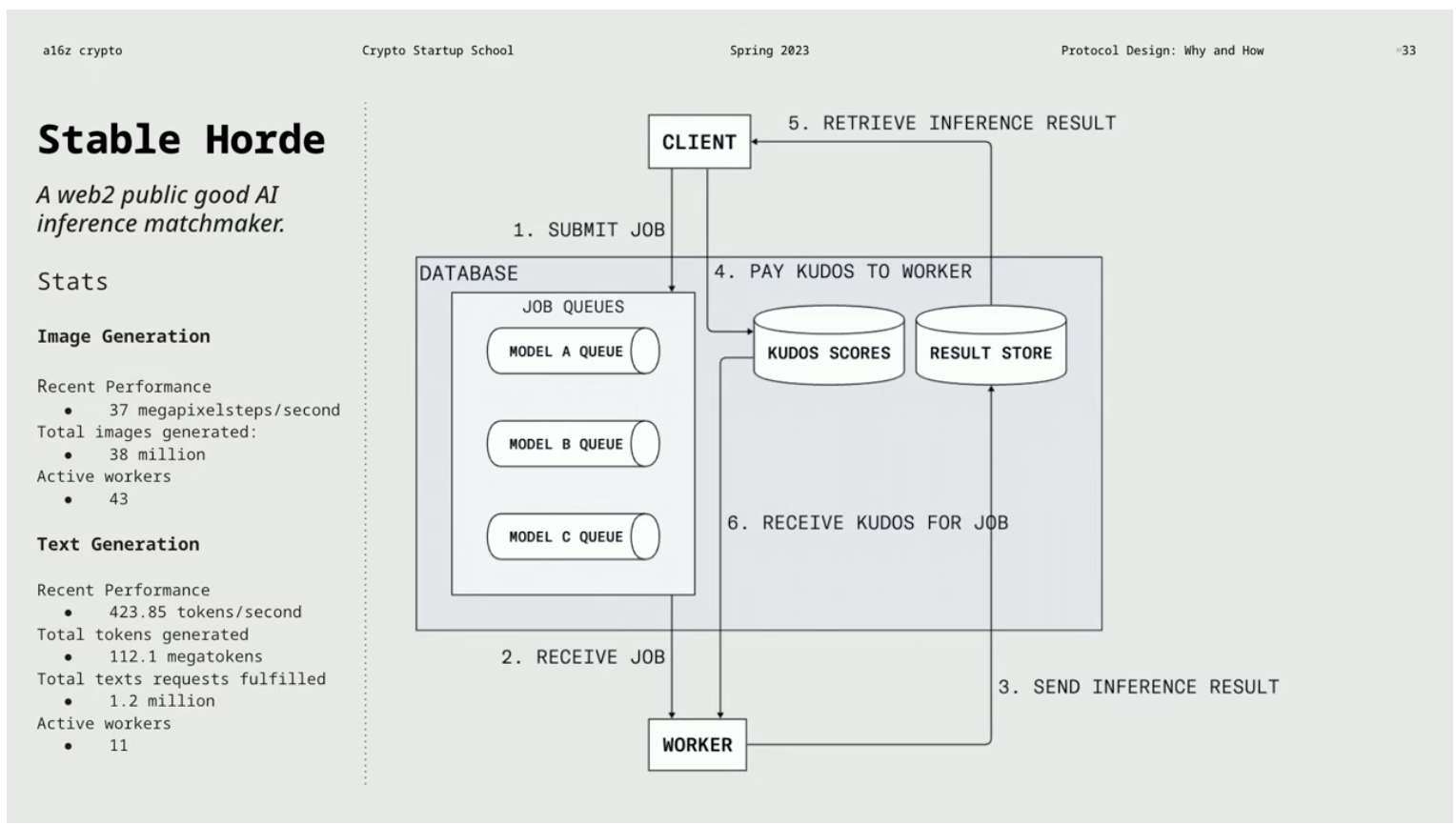

Consider an interesting example. “Stable Horde” is a free image-generation system built as a Web2 protocol. It acts as a collaboration layer: users request images, and volunteers who are willing to help generate them. The client submits a task to the work queue, a worker performs inference and sends the result to the result store, and the client retrieves the result and pays the worker in Kudos points. In Stable Horde, Kudos is a free point system used to prioritize tasks. Because the system depends on donated computing resources, however, the longer the queue, the longer it takes to generate an image.
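The request flow described above can be sketched as a single-process Python model. This is not Stable Horde’s actual code or API: the class and method names (`Horde`, `submit`, `claim`, `complete`, `retrieve`) and the per-task Kudos amount are hypothetical, and everything lives in memory.

```python
from __future__ import annotations

from collections import deque
from dataclasses import dataclass


@dataclass
class Task:
    task_id: int
    client: str
    prompt: str
    kudos: int  # points the client pays the worker for this task


class Horde:
    """Toy in-memory model of the Stable Horde flow (hypothetical names)."""

    def __init__(self) -> None:
        self.queue: deque[Task] = deque()              # work queue
        self.tasks: dict[int, Task] = {}
        self.results: dict[int, tuple[str, str]] = {}  # result store: id -> (image, worker)
        self.kudos: dict[str, int] = {}                # balances (may go negative in this toy)
        self._next_id = 0

    def submit(self, client: str, prompt: str, kudos: int) -> int:
        task = Task(self._next_id, client, prompt, kudos)
        self._next_id += 1
        self.tasks[task.task_id] = task
        self.queue.append(task)
        return task.task_id

    def claim(self, worker: str) -> Task | None:
        # First come, first served: whichever worker asks first gets the oldest task.
        return self.queue.popleft() if self.queue else None

    def complete(self, task_id: int, worker: str, image: str) -> None:
        # The worker runs inference elsewhere and stores the output here.
        self.results[task_id] = (image, worker)

    def retrieve(self, task_id: int) -> str | None:
        # The client fetches the result and pays the worker in Kudos.
        if task_id not in self.results:
            return None
        image, worker = self.results.pop(task_id)
        task = self.tasks.pop(task_id)
        self.kudos[task.client] = self.kudos.get(task.client, 0) - task.kudos
        self.kudos[worker] = self.kudos.get(worker, 0) + task.kudos
        return image


horde = Horde()
tid = horde.submit("client-a", "a lighthouse at dusk", kudos=10)
task = horde.claim("worker-1")
horde.complete(task.task_id, "worker-1", "<image bytes>")
print(horde.retrieve(tid), horde.kudos)
```

Nothing here stops a worker from returning a fake image or claiming tasks it never finishes; those are exactly the gaps a Web3 version has to close.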

This raises an interesting question: how can the system be scaled up and made more professional while remaining open and interoperable, without the centralization that would undermine the project’s original spirit? One suggestion is to convert Kudos into ERC-20 tokens recorded on a blockchain. However, simply adding a blockchain can trigger a series of problems, such as Sybil attacks, in which one party creates many fake identities to gain outsized influence or rewards.

Let’s rethink the protocol design process. Always start with clear goals, then consider constraints, and finally choose mechanisms. Goals should be measurable so that you can tell whether a mechanism is effective. Constraints can be endogenous or exogenous; by limiting the design space, they make the choice of mechanisms clearer. Mechanisms are the substance of the protocol: liquidation, pricing, collateral, incentives, payments, verification, and so on. The design should respect the constraints and satisfy the stated goals.
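One way to picture how constraints narrow the design space is the toy Python sketch below. The options, their attributes, and the scoring rule are hypothetical placeholders, not a method from the course: constraints act as hard filters, and the goal ranks whatever survives.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mechanism:
    name: str
    needs_trusted_party: bool
    cost: int     # relative cost, hypothetical units
    latency: int  # relative latency, hypothetical units


candidates = [
    Mechanism("option A", needs_trusted_party=True, cost=1, latency=1),
    Mechanism("option B", needs_trusted_party=False, cost=2, latency=2),
    Mechanism("option C", needs_trusted_party=False, cost=5, latency=1),
    Mechanism("option D", needs_trusted_party=False, cost=4, latency=3),
]

# Constraints are hard limits: anything that violates one leaves the design space.
constraints = [
    lambda m: not m.needs_trusted_party,  # e.g. no single trusted operator
    lambda m: m.cost <= 4,                # e.g. a budget ceiling
]


def goal_score(m: Mechanism) -> tuple[int, int]:
    """Goal: prefer lower latency, then lower cost (a lower tuple is better)."""
    return (m.latency, m.cost)


feasible = [m for m in candidates if all(check(m) for check in constraints)]
best = min(feasible, key=goal_score)
print([m.name for m in feasible], "->", best.name)
```

Option A is the cheapest and fastest, yet it never reaches the scoring step because it violates a constraint.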

Web3 Protocol Example: Unstable Confusion

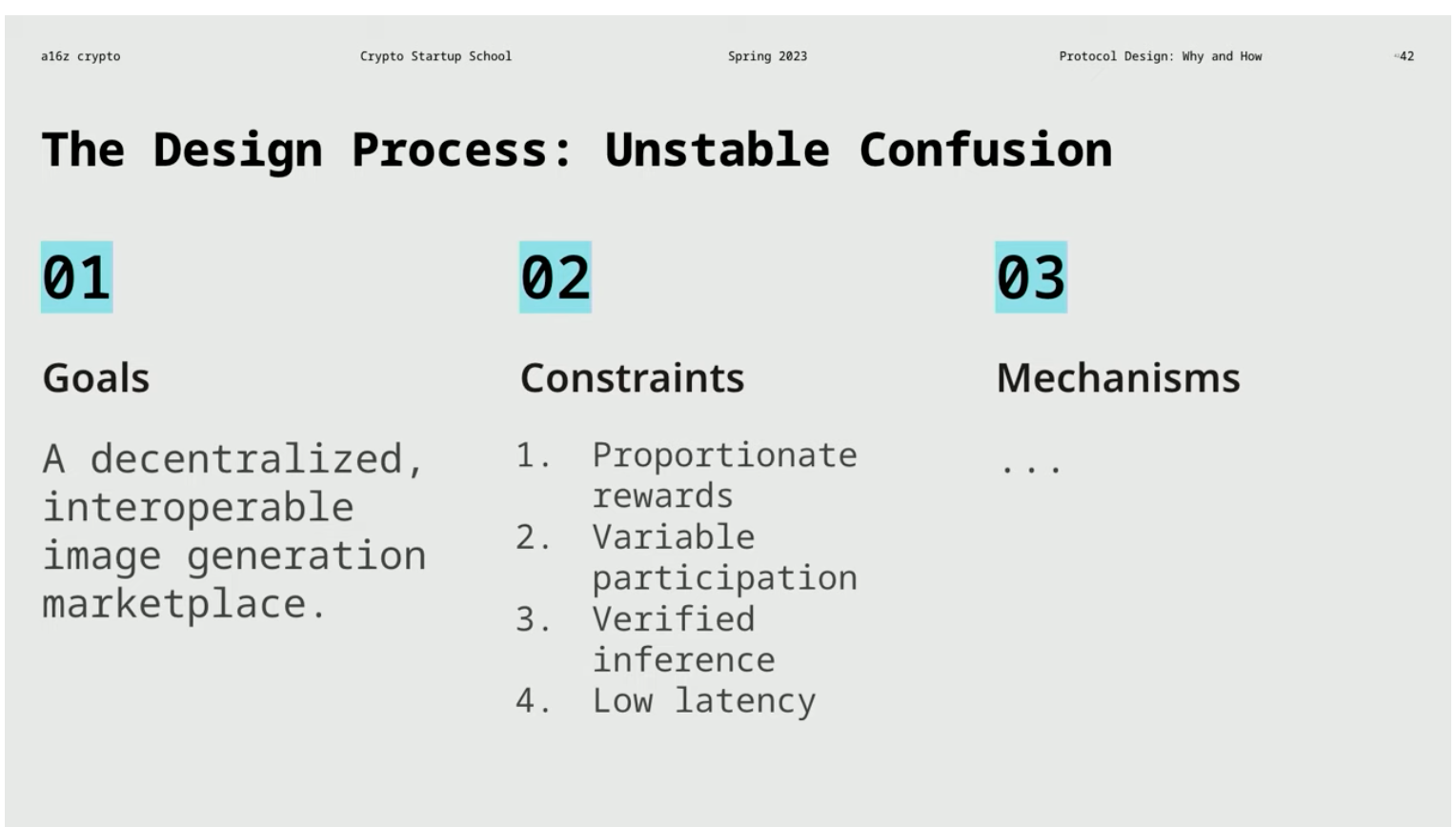

Let’s continue with a brand-new Web3 protocol called “Unstable Confusion.” The following paragraphs outline some interesting directions proposed for converting the existing Web2 protocol “Stable Horde” into the Web3 protocol “Unstable Confusion.”

One problem is that workers can send false results, so a mechanism is needed to ensure that users get what they asked for. This is called “verifiable inference”: the protocol must be able to verify an inference to confirm that its result meets expectations. Another problem concerns how workers obtain tasks in “Stable Horde.” Workers request the next task from the database, and each task goes to whichever worker requested it first. Once money is involved, however, workers have an incentive to claim large numbers of tasks to collect more rewards without actually intending to complete them. They may race on latency to grab tasks, congesting the system.
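The claiming problem can be shown with a small simulation. This is a hypothetical Python sketch, assuming a first-come-first-served queue with no limit on how many tasks a worker may hold, and a greedy worker whose lower latency lets it land several requests before an honest worker lands one. Every task the greedy worker hoards and never finishes is a client left waiting.

```python
from collections import deque


def simulate(num_tasks: int, greedy_requests_per_round: int) -> dict[str, int]:
    """Count how many tasks each worker ends up holding under FCFS claiming."""
    queue = deque(range(num_tasks))
    held = {"greedy": 0, "honest": 0}
    while queue:
        # The greedy worker has lower latency, so its requests land first each round.
        for _ in range(greedy_requests_per_round):
            if queue:
                queue.popleft()
                held["greedy"] += 1
        # The honest worker asks for one task per round, if any are left.
        if queue:
            queue.popleft()
            held["honest"] += 1
    return held


print(simulate(num_tasks=100, greedy_requests_per_round=1))  # tasks split evenly
print(simulate(num_tasks=100, greedy_requests_per_round=9))  # greedy worker hoards 90
```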

Several responses to these problems have been proposed. The first is “pay by contribution”: workers are rewarded in proportion to their contribution, so they compete for tasks in ways that benefit the network. The second is “flexible participation”: workers can join or leave the system at low cost, which attracts more participants. The third is “low latency,” because an application’s responsiveness and speed are crucial to user experience. Returning to our overall goal, which is to build a decentralized, interoperable image-generation market: some key constraints remain, and details can be added, modified, or made more specific later. For now, we can evaluate the feasibility of different mechanisms.
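Paying by contribution can be expressed as a pro-rata split: each worker receives the reward pool multiplied by its share of verified work. The sketch below assumes contribution is already measured in some verified unit (for example, completed inference tasks); how to measure it is a separate design question, and `split_rewards` is a hypothetical name. Integer arithmetic avoids creating fractional token units, and any rounding remainder stays in the pool.

```python
def split_rewards(pool: int, contributions: dict[str, int]) -> tuple[dict[str, int], int]:
    """Split an integer reward pool in proportion to each worker's contribution.

    Returns (payouts, remainder); the remainder is left undistributed
    because of integer rounding.
    """
    total = sum(contributions.values())
    if total == 0:
        return {worker: 0 for worker in contributions}, pool
    payouts = {worker: pool * c // total for worker, c in contributions.items()}
    return payouts, pool - sum(payouts.values())


payouts, leftover = split_rewards(1_000, {"w1": 50, "w2": 30, "w3": 20})
print(payouts, leftover)
```

Claiming a task earns nothing by itself under this rule; only verified work moves a worker’s share, which blunts the hoarding incentive described earlier.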

Potential Mechanism Design

1. Verification Mechanism

Game theory and cryptography can both help ensure that inference is correct. Game-theoretic mechanisms include dispute resolution systems, in which users can escalate disputes to designated arbiters. Continuous or sampled auditing is another approach: some tasks are re-assigned to different workers to check the original worker’s output, and the audit results are recorded. On the cryptographic side, zero-knowledge proofs can produce succinct proofs that an inference was computed correctly. Traditional approaches include trusted third parties and user ratings, but they carry centralization risks and tend to concentrate network effects.
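Sampled auditing can be sketched as follows, assuming inference is deterministic for a given prompt and seed, so an honest re-run produces byte-identical output (real GPU inference is not always bit-for-bit reproducible, so a production design would need a looser comparison). All names are hypothetical, and a hash stands in for the model. If a worker fakes a fraction f of its outputs and each task is audited with probability p, the chance of catching it at least once over n tasks is 1 - (1 - pf)^n, which approaches 1 as n grows.

```python
from __future__ import annotations

import hashlib
import random


def run_inference(prompt: str, seed: int) -> bytes:
    # Stand-in for a deterministic model: same prompt and seed give the same bytes.
    return hashlib.sha256(f"{prompt}|{seed}".encode()).digest()


def cheating_worker(prompt: str, seed: int) -> bytes:
    return b"\x00" * 32  # returns junk instead of running the model


def audit(prompt: str, seed: int, claimed: bytes,
          audit_rate: float, rng: random.Random) -> bool | None:
    """With probability audit_rate, an auditor re-runs the task and compares outputs.

    Returns True (match), False (mismatch), or None (task not sampled).
    """
    if rng.random() >= audit_rate:
        return None
    return run_inference(prompt, seed) == claimed


def catch_probability(fake_fraction: float, audit_rate: float, n_tasks: int) -> float:
    """P(at least one faked task is audited) = 1 - (1 - f*p)^n."""
    return 1 - (1 - fake_fraction * audit_rate) ** n_tasks


rng = random.Random(0)
outcomes = [audit("cat", s, cheating_worker("cat", s), 0.1, rng) for s in range(50)]
print("failed audits:", outcomes.count(False))
print(f"catch probability: {catch_probability(0.5, 0.1, 100):.3f}")
```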

Another possible verification mechanism is to have multiple workers perform the same task and let the user choose among the results. This multiplies the cost, but if each run is cheap enough, the approach may be worth considering.
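A minimal sketch of redundant execution, assuming the protocol sends the same task to every worker in a set and hands the user each distinct output together with how many workers produced it. The user, not the protocol, makes the final choice, and the cost scales linearly with the number of workers. The worker functions and price are hypothetical.

```python
from collections import Counter
from typing import Callable

Worker = Callable[[str], str]  # a worker maps a prompt to an output


def redundant_run(
    prompt: str, workers: list[Worker], price_per_run: int
) -> tuple[list[tuple[str, int]], int]:
    """Send one task to every worker.

    Returns each distinct output with the number of workers that produced it
    (most common first), plus the total the user pays for all runs.
    """
    outputs = Counter(worker(prompt) for worker in workers)
    return outputs.most_common(), price_per_run * len(workers)


def honest(prompt: str) -> str:
    return f"image-of-{prompt}"


def lazy(prompt: str) -> str:
    return "blank-image"  # skips the work


options, cost = redundant_run("a red fox", [honest, honest, lazy], price_per_run=3)
print(options, cost)
```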

2. Pricing Strategy

For pricing, one option is an on-chain order book. Another is to measure work with an on-chain-verified proxy for computational resources, similar to gas. This differs from a simple free market, in which users post the inference fee they are willing to pay and workers either accept it or bid for the task. Instead, one can define a gas-like proxy measure: a given inference requires a certain amount of computational resources, and that amount directly determines the price. This simplifies how the whole mechanism operates.
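A gas-style proxy can be sketched as a fixed schedule that converts a request’s parameters into compute units, which are then multiplied by a unit price. The schedule below (a flat base cost plus units per megapixel per denoising step) and all numbers are hypothetical, not from the course. Because the measure is a deterministic function of the request, anyone, including a contract, can recompute it.

```python
from dataclasses import dataclass

# Hypothetical schedule, analogous to a gas table; the protocol would fix these values.
BASE_UNITS = 1_000              # flat overhead charged per request
UNITS_PER_MEGAPIXEL_STEP = 50   # one denoising step over one megapixel


@dataclass(frozen=True)
class InferenceRequest:
    width: int
    height: int
    steps: int


def compute_units(req: InferenceRequest) -> int:
    """Deterministic resource measure: anyone can recompute it from the request."""
    pixel_steps = req.width * req.height * req.steps
    return BASE_UNITS + UNITS_PER_MEGAPIXEL_STEP * pixel_steps // 1_000_000


def price(req: InferenceRequest, unit_price: int) -> int:
    """Price = compute units x unit price, like gas used x gas price."""
    return compute_units(req) * unit_price


req = InferenceRequest(width=1024, height=1024, steps=30)
print(compute_units(req), price(req, unit_price=2))
```

Only the unit price needs to move with supply and demand; the per-task amount follows mechanically from the schedule instead of being negotiated task by task.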

An off-chain order book is also possible; it is cheaper to operate and may be very efficient. The problem is that whoever runs the order book may concentrate network effects on themselves.
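Whether it runs on-chain or off-chain, an order book does the same basic job: match user bids against worker asks. A minimal Python sketch, assuming a single homogeneous task type, price-then-arrival priority, and settlement at the worker’s ask price (all simplifications; a real venue chooses its own rules). The `Order` type and `match` function are hypothetical.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Order:
    price: int
    seq: int                           # arrival order, breaks price ties
    owner: str = field(compare=False)


def match(bids: list[Order], asks: list[Order]) -> list[tuple[str, str, int]]:
    """Match the highest bids with the lowest asks while the bid covers the ask.

    Returns (user, worker, price) fills, settled at the ask price.
    """
    bid_heap = [Order(-b.price, b.seq, b.owner) for b in bids]  # max-heap via negation
    ask_heap = list(asks)
    heapq.heapify(bid_heap)
    heapq.heapify(ask_heap)
    fills = []
    while bid_heap and ask_heap and -bid_heap[0].price >= ask_heap[0].price:
        bid = heapq.heappop(bid_heap)
        ask = heapq.heappop(ask_heap)
        fills.append((bid.owner, ask.owner, ask.price))
    return fills


bids = [Order(12, 0, "user-a"), Order(8, 1, "user-b"), Order(15, 2, "user-c")]
asks = [Order(10, 3, "worker-x"), Order(9, 4, "worker-y"), Order(14, 5, "worker-z")]
print(match(bids, asks))
```

The matching logic is identical in both settings; what changes is who runs it and who can see the book, which is where the centralization risk comes from.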

3. Storage Mechanism

Storage is crucial for ensuring that work results are correctly delivered to users, but it is difficult to minimize trust and prove that work was delivered correctly. A user may dispute whether a result was delivered, much like a buyer complaining that an ordered item never arrived. Auditors may also need to re-check the computation and the accuracy of its output. The output should therefore be visible to the protocol and stored where the protocol can access it.

There are several storage options. One is to store data on-chain, but this is expensive. Another is to use a dedicated crypto storage network, which is more complex but tries to solve the problem peer to peer. Data can also be stored off-chain, but this raises other issues, because whoever controls the storage system may influence other parts of the protocol, such as verification and the final release of payment.
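One common way to combine these options is to keep only a short hash commitment on-chain and store the full output elsewhere. This is offered as an assumption, not something the course prescribes. Anyone holding the data can then check it against the commitment, no matter who operates the storage. Note that this proves integrity, not availability: an off-chain store can still withhold data. A minimal Python sketch with hypothetical names, using SHA-256 from the standard library:

```python
import hashlib

onchain_commitments: dict[int, str] = {}  # stands in for a contract's storage
offchain_store: dict[int, bytes] = {}     # any storage network or server


def publish(task_id: int, output: bytes) -> None:
    # The worker posts only the 32-byte digest on-chain; the bulky output goes off-chain.
    onchain_commitments[task_id] = hashlib.sha256(output).hexdigest()
    offchain_store[task_id] = output


def verify_delivery(task_id: int, delivered: bytes) -> bool:
    """Check delivered bytes against the on-chain commitment."""
    expected = onchain_commitments.get(task_id)
    return expected is not None and hashlib.sha256(delivered).hexdigest() == expected


publish(7, b"<png bytes>")
print(verify_delivery(7, offchain_store[7]))    # True: data matches the commitment
print(verify_delivery(7, b"<tampered bytes>"))  # False
```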

4. Task Allocation Strategy

Task allocation also needs careful thought and is a relatively complex area. Workers can choose tasks after users submit them, the protocol can assign tasks after submission, or users can choose specific workers. Each approach has its own advantages and disadvantages, and a protocol can also combine them, for example by constraining which workers may request which tasks.

Task allocation involves many interesting details. For example, if the protocol assigns tasks, it needs to know whether a worker is online and available before assigning it a task, as well as each worker’s capacity and current workload. The protocol therefore has to account for various additional factors, which an initial, simple implementation may leave out.
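For protocol-driven assignment, the protocol needs each worker’s liveness and load before it hands out a task. A minimal sketch, assuming workers send periodic heartbeats and advertise a fixed capacity. The timeout, field names, and least-loaded policy are hypothetical choices, one of many possible.

```python
from __future__ import annotations

from dataclasses import dataclass

HEARTBEAT_TIMEOUT = 30.0  # seconds without a heartbeat before a worker counts as offline


@dataclass
class WorkerState:
    name: str
    capacity: int              # max concurrent tasks the worker says it can run
    active: int = 0            # tasks currently assigned
    last_heartbeat: float = 0.0


def assign(workers: list[WorkerState], now: float) -> WorkerState | None:
    """Pick the online worker with spare capacity and the lowest relative load."""
    eligible = [
        w for w in workers
        if now - w.last_heartbeat <= HEARTBEAT_TIMEOUT and w.active < w.capacity
    ]
    if not eligible:
        return None
    chosen = min(eligible, key=lambda w: w.active / w.capacity)
    chosen.active += 1
    return chosen


pool = [
    WorkerState("gpu-1", capacity=4, active=3, last_heartbeat=100.0),
    WorkerState("gpu-2", capacity=2, active=0, last_heartbeat=95.0),
    WorkerState("gpu-3", capacity=8, active=0, last_heartbeat=10.0),  # stale: offline
]
print(assign(pool, now=110.0).name)  # gpu-2
```

A simple first version might skip heartbeats entirely; this sketch shows the extra state the protocol must track once it takes responsibility for assignment.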

Key points of decentralized protocol design

7 key design elements that may lead to centralization risks

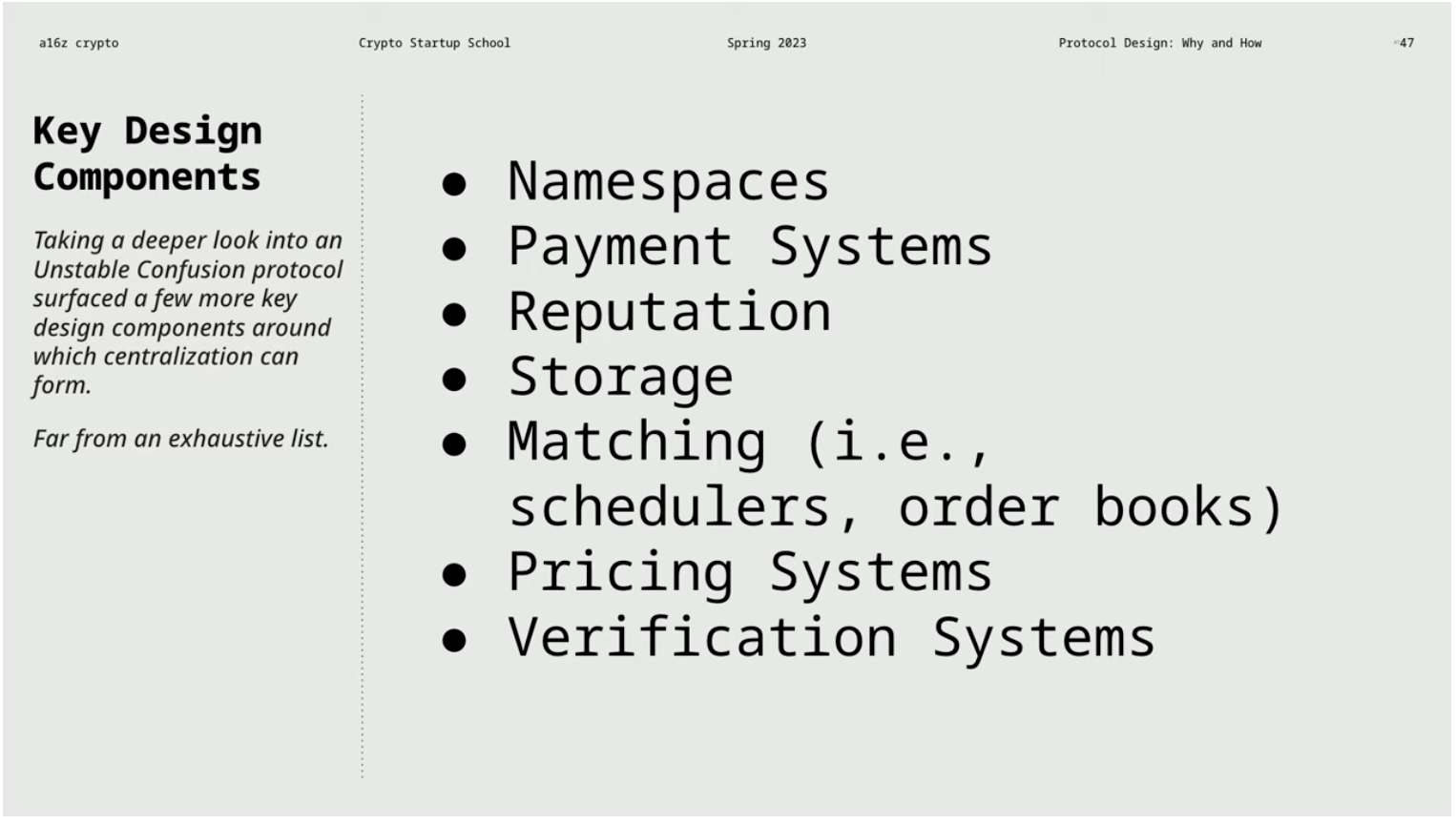

These elements are namespaces (such as the email domains discussed earlier), payments, reputation, storage, matching, pricing, and verification. Each of them can become centralized through network effects or high switching costs. To keep the system healthy over the long term, designers can reduce network effects, channel network effects into the protocol itself, and establish a decentralized control layer to govern the protocol. Decentralized control can be achieved with volatile tokens or other governance designs, such as reputation systems or rotating elections.

Reducing switching costs and promoting interoperability

Reducing switching costs and promoting interoperability between different systems is important to encourage entrepreneurs to build applications on the system. Avoid introducing high switching costs and reduce over-reliance on off-chain order books or third-party verification systems.

Creating decentralized systems using Web3 technology

Use Web3 tools and principles to design systems that give entrepreneurs more power and avoid over-centralization. Protocols that embrace Web3 principles tend to grow larger, last longer, and support more vibrant ecosystems, providing fertile ground for innovation beyond the boundaries set by the largest incumbent companies.

Thoroughly research and choose the best solution

When designing a protocol and choosing strategies, research every aspect in depth. For verification, cryptographic solutions are often the best choice. For pricing, an on-chain-verified proxy for computational resources can accommodate many kinds of inference and other machine learning tasks. For task allocation, a protocol can track each worker’s capacity and status in real time, allocate tasks fairly, and let workers choose whether to accept them. For storage, temporary storage such as proto-danksharding, which keeps data available only for a short time window, can address the problem.

Considering the above points when designing a decentralized system can help build a system with long-term robustness and decentralized characteristics.

Original article: Protocol design: Why and how

Full translation link: https://mp.weixin.qq.com/s/ms56Ax0tIKwX1nwIsHoa1A

We will continue to update Gambling Chain; if you have any questions or suggestions, please contact us!