Author: Figment Capital; Compilation: Block unicorn

Introduction:

Zero-knowledge (ZK) technology is improving rapidly. As it advances, more ZK applications will emerge, driving up demand for zero-knowledge proof (ZKP) generation.

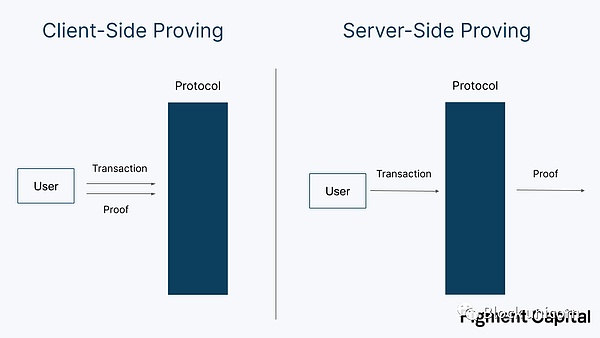

Currently, most ZK applications are privacy-preserving protocols. Proofs for privacy applications such as Zcash and Tornado Cash are generated locally by users, because generating these ZKPs requires knowledge of secret inputs. These computations are relatively small and can run on consumer-grade hardware. We refer to proofs generated by users as client-side proving.

Although some proofs are relatively lightweight to generate, others require much larger computations. For example, a validity rollup (i.e., a zkRollup) may need to prove thousands of transactions in a ZK virtual machine (zkVM), which requires more computing resources and therefore longer proving times. Generating proofs for these large computations requires powerful machines. Fortunately, because these proofs rely only on the succinctness of ZKPs and not on the zero-knowledge property (there is no secret input), proof generation can be safely outsourced to external parties. We call this outsourced proof generation (delegating the proving work to cloud services or other participants) server-side proving.

Block unicorn note: Zero-knowledge and zero-knowledge proofs are related but different. Zero-knowledge is a privacy property: during an interaction, one party can establish that something is true without leaking any other information, thereby protecting privacy.

Zero-knowledge proof is a cryptographic tool used to prove the correctness of a certain assertion without revealing any additional information about the assertion. It is a technology based on mathematical algorithms and protocols, used to prove the truth of a certain assertion to others without exposing sensitive information. Zero-knowledge proof allows the proving party to provide the proof to the verifying party, and the verifying party can verify the correctness of the proof, but cannot obtain the specific information behind the proof.

In short, zero-knowledge is the general concept of keeping information confidential during an interaction or proof, while a zero-knowledge proof is a specific cryptographic technique for achieving that property when proving a statement.

In this article, the terms “prover” and “validator” have different meanings:

Prover: refers to the entity that performs specific proof generation tasks. They are responsible for generating zero-knowledge proofs to verify and prove specific computations or transactions. Provers may be computing nodes running on decentralized networks or specialized hardware devices.

Validator: refers to the nodes that participate in the consensus mechanism of the blockchain. They are responsible for verifying and validating the validity of transactions and blocks and participating in the consensus process. Validators typically need to stake a certain amount of tokens as security guarantees and receive rewards based on the proportion of their staked amount. Validators do not necessarily directly perform specific proof generation tasks, but they ensure the security and integrity of the network by participating in consensus.

Server-Side Proving

Server-side proving is already used in many blockchain applications, including:

1. Scalability: Validity Rollup technologies such as StarkNet, zkSync, and Scroll extend the scalability of Ethereum by moving computations off-chain.

2. Cross-Chain Interoperability: Proofs can be used to enable trust-minimized communication between different blockchains, allowing secure transfers of data and assets. Teams include Polymer, Polyhedra, Herodotus, and Succinct.

3. Trustless Middleware: Middleware projects such as RiscZero and HyperOracle use zero-knowledge proofs to provide access to trustless off-chain computations and data.

4. Succinct L1s (ZKP-based layer-1 blockchains): Succinct blockchains such as Mina and Repyh use recursive SNARKs so that users with limited computing power can verify the chain state independently.

Although most applications that use server-side proving start out centralized, their long-term goal is to decentralize the prover role. As with other parts of the infrastructure stack, such as validators and sequencers, decentralizing the prover role effectively will require careful protocol and incentive design.

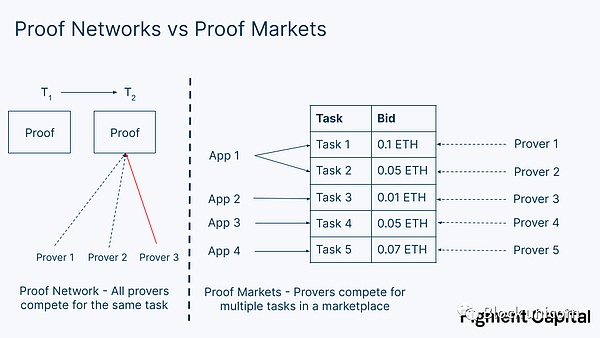

In this article, we explore the design of decentralized proof networks. We first distinguish between proof networks and proof markets. A proof network is a set of provers serving a single application, such as a validity rollup. A proof market is an open market where multiple applications can submit requests for verifiable computation. We then outline the current landscape of decentralized proof network models and share some preliminary thoughts on proof market design, an area that is still underdeveloped. Finally, we discuss the challenges of operating zero-knowledge (ZK) infrastructure and conclude that staking businesses and specialized ZK teams are better suited than PoW miners to meet the emerging demand from proof markets.

Proof Networks and Proof Markets

Zero-knowledge (ZK) applications require provers to generate their proofs. Although proving is currently centralized, most ZK applications will move toward decentralized proof generation. Provers do not need to be trusted to produce correct outputs, because proofs can be verified easily. Even so, applications pursue decentralized proving for several reasons:

1. Liveness: Multiple provers keep the protocol running reliably, with no downtime if some provers are temporarily unavailable.

2. Censorship resistance: More provers increase resistance to censorship, since a small prover set could refuse to prove certain types of transactions.

3. Competition: A larger prover set creates market pressure on operators to produce faster, cheaper proofs.

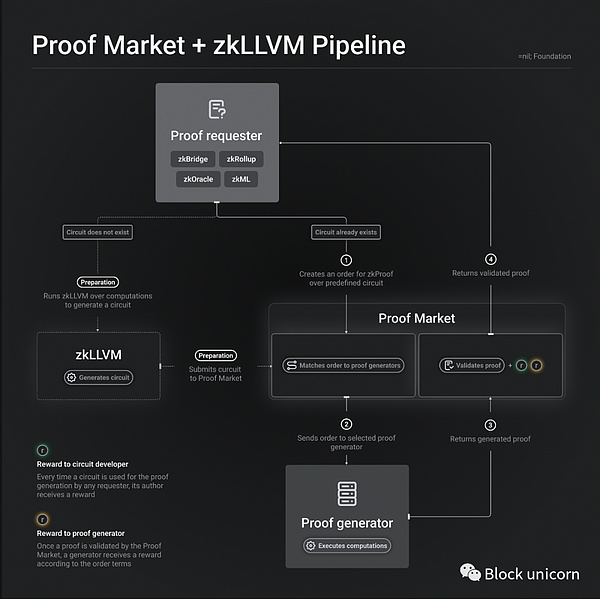

This leaves applications with a design decision: should they launch their own proof network or outsource the responsibility to a proof market? Outsourcing proof generation to proof markets under development, such as =nil; Foundation, RiscZero, and Marlin, provides plug-and-play decentralized proving and lets application developers focus on other components of their stack. Indeed, these markets are a natural extension of the modular thesis. Just as a shared sequencer serves many applications, a proof market is effectively a shared proof network. By sharing provers across applications, proof markets can also maximize hardware utilization: provers can be redeployed to other applications when one application does not need proofs immediately.

However, proof markets also have drawbacks. Keeping the prover role in-house can increase the utility of a protocol's native token, since the protocol can use it for prover staking and incentives. It also gives the application more sovereignty, rather than introducing an external point of failure.

One important difference between proof networks and proof markets is that, in a proof network, the prover set typically needs to fulfill only one proof request at a time. For example, a validity rollup receives a batch of transactions, computes a validity proof showing they were executed correctly, and sends the proof to L1 (the layer-1 network); that single validity proof is generated by one prover selected from a decentralized set.

Decentralized Proof Networks

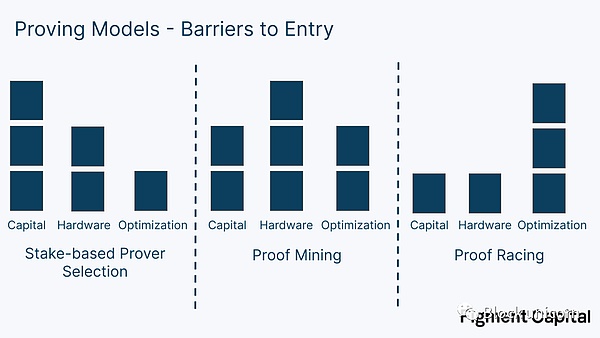

As ZK protocols mature, many teams are gradually decentralizing their infrastructure to improve network liveness and censorship resistance. Introducing multiple provers adds complexity to the protocol; in particular, the protocol must now decide which prover to assign to a given computation. There are currently three main approaches:

- Stake-based selection: Provers stake assets to participate in the network. In each proof epoch, a prover is selected at random, weighted by the value of its staked tokens, and computes the outputs. The selected prover is compensated for generating the proof. Slashing conditions and leader selection details vary by protocol. This model resembles a PoS mechanism.

- Proof mining: Provers repeatedly generate ZKPs until they produce a proof with a sufficiently rare hash value. Doing so earns them the right to prove in the next epoch and collect the epoch reward, so provers that can generate more ZKPs are more likely to win epochs. This approach closely resembles PoW mining: it consumes a lot of energy and hardware resources. One key difference from traditional mining is that, in PoW, hashing is only a means to an end. In Bitcoin, the ability to compute SHA-256 hashes serves no purpose other than securing the network. In proof mining, by contrast, the network incentivizes miners to speed up ZKP generation, which ultimately benefits the network. Proof mining was pioneered by Aleo.

- Proof competition: In each epoch, provers race to generate a proof as quickly as possible, and the first to do so receives the epoch reward. This approach is prone to winner-takes-all dynamics: if a single operator can generate proofs faster than everyone else, it should win every epoch. This can be mitigated by splitting rewards among the first N operators to produce a valid proof, or by introducing some randomness to reduce centralization. Even then, however, the fastest operator can run multiple machines to capture additional income.
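The stake-weighted draw in the first approach can be sketched as a lottery run once per proof epoch. This is a minimal illustration, not any specific protocol's leader election: the prover names and stake values are hypothetical, and Python's local PRNG stands in for the verifiable, unbiasable randomness source a real network would need.

```python
import random
from collections import Counter
from typing import Dict


def select_prover(stakes: Dict[str, float], rng: random.Random) -> str:
    """Pick the prover for one proof epoch with probability proportional to stake.

    Assumption: `rng` stands in for an unbiasable on-chain randomness source.
    """
    if not stakes:
        raise ValueError("no provers registered")
    provers = list(stakes)
    weights = [stakes[p] for p in provers]
    if any(w < 0 for w in weights) or sum(weights) <= 0:
        raise ValueError("stakes must be non-negative with a positive total")
    return rng.choices(provers, weights=weights, k=1)[0]


# Hypothetical prover set; staked values are made up for illustration.
stakes = {"prover_a": 600.0, "prover_b": 300.0, "prover_c": 100.0}
rng = random.Random(42)  # fixed seed so the simulation is reproducible
epochs = 10_000
wins = Counter(select_prover(stakes, rng) for _ in range(epochs))
for prover, count in sorted(wins.items()):
    # Each prover's share of epochs approaches its share of total stake.
    print(prover, count / epochs)
```

Because selection ignores proving speed, a faster prover wins no more epochs unless it attracts more stake, which is the property that makes this model friendlier to decentralization.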

Another technique is distributed proving. Instead of a single prover winning the right to prove during a given period, the proving task is split among multiple participants who collaborate to produce a single output. One example is the Joint Proof Network, which divides a proof into many smaller statements that can be proven individually and then recursively combines them, in a tree-like structure, into a single statement. Another example is zkBridge, which introduces a ZKP protocol called deVirgo that makes it easy to distribute proving across multiple machines and has already been deployed by Polyhedra. Distributed proving is inherently easier to decentralize and can significantly speed up proof generation. Participants can form a computing cluster that takes part in proof mining or competition, with rewards split in proportion to each participant's contribution to the cluster, so distributed proving is compatible with any prover selection model.
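The tree-shaped aggregation and contribution-based rewards described above can be sketched with simple bookkeeping. This does not generate real proofs; it assumes pairwise (binary) recursive aggregation and assumes rewards are split only by the number of leaf statements each participant proved, both hypothetical simplifications.

```python
from typing import Dict


def aggregation_levels(num_leaf_proofs: int) -> int:
    """Levels of pairwise recursive aggregation needed to fold all leaves into one root.

    This is ceil(log2(n)); (n - 1).bit_length() computes it exactly for integers n >= 1.
    """
    if num_leaf_proofs < 1:
        raise ValueError("need at least one leaf proof")
    return (num_leaf_proofs - 1).bit_length()


def split_rewards(pool: float, leaves_proven: Dict[str, int]) -> Dict[str, float]:
    """Divide a reward pool in proportion to each participant's share of leaf proofs."""
    total = sum(leaves_proven.values())
    if total <= 0:
        raise ValueError("no work recorded")
    return {p: pool * n / total for p, n in leaves_proven.items()}


# Hypothetical cluster: 16 leaf statements split across three participants.
work = {"node_1": 8, "node_2": 6, "node_3": 2}
print(aggregation_levels(sum(work.values())))  # 4
print(split_rewards(100.0, work))  # {'node_1': 50.0, 'node_2': 37.5, 'node_3': 12.5}
```

The number of aggregation levels grows only logarithmically, so doubling the number of leaf proofs adds just one more aggregation step.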

Stake-based prover selection, proof mining, and proof competition make different trade-offs along three dimensions: capital requirements, hardware accumulation, and proof optimization.

The stake-based model requires provers to stake capital but puts less pressure on accelerating proof generation, because provers are not selected on speed (although faster provers may find it easier to attract delegators). Proof mining sits in between: it requires some capital to accumulate machines and pay energy costs to generate more proofs, and it rewards ZKP acceleration, just as Bitcoin mining rewards faster SHA-256 hashing. Proof competition requires the least capital and infrastructure, since an operator can enter each epoch's race with a single highly optimized machine. Although it is the lightest-weight approach, we believe proof competition carries the highest centralization risk because of its winner-takes-all dynamics. Proof competition and proof mining both lead to redundant computation, but they offer stronger liveness guarantees, because there is no risk that a single selected prover misses its epoch.

Another benefit of the stake-based model is that provers face less pressure to compete on performance, which leaves room for cooperation between operators. Cooperation typically involves knowledge sharing, such as spreading new techniques that accelerate proof generation or helping new operators get started with proving. In contrast, proof competition more closely resembles MEV (maximal extractable value) searching, where participants are more secretive and adversarial in order to protect their competitive edge.

Of these three factors, we believe speed requirements will be the main variable determining whether a network can decentralize its prover set. Capital and hardware will be readily available, but the more fiercely provers compete on speed, the less decentralized the network's proving will be. On the other hand, other things being equal, the more a network incentivizes speed, the better it will perform. Although the specific impact may vary, proof networks face the same trade-off between performance and decentralization as layer-1 blockchains.

Which proof model will win?

We expect that most proof networks will adopt a stake-based model, which provides the best balance between incentivizing performance and maintaining decentralization.

Distributed proving may not be suitable for most validity rollups. A model in which each prover proves a small fraction of the transactions and the results are then recursively aggregated faces network bandwidth limits. The sequential nature of the transactions also complicates aggregation: proofs of earlier transactions must be included before proofs of later ones. If any single prover fails to deliver its proof, the final proof cannot be constructed.

Outside of Aleo and Iron Fish, we do not expect ZK mining to become popular among ZK applications: it consumes energy and is unnecessary for most applications. Proof races are also unlikely to be popular, since they lead to centralization. The more a protocol prioritizes performance over decentralization, the more attractive race-based models become. However, accessible ZK hardware and software acceleration already deliver significant speed improvements. We expect that, for most applications, adopting a race-based model to speed up proof generation would bring only marginal improvements, which are not worth the loss of decentralization.

Designing proof markets

As more and more applications adopt zero-knowledge (ZK) technology, many are realizing that they would prefer to outsource their ZK infrastructure to proof markets rather than run it in-house. Unlike proof networks, which serve only a single application, proof markets can serve multiple applications and meet their respective proving needs. These markets aim to be high-performance, decentralized, and flexible.

High Performance: Demand in the market is diverse. For example, some proofs require far more computation than others. Proofs that take longer to generate will need dedicated hardware and other optimizations so that the market can offer fast proving to the applications and users willing to pay for it.

Decentralization: Like proof networks, proof markets and the applications that use them want to be decentralized. Decentralized proving improves liveness, censorship resistance, and market efficiency.

Flexibility: Other things being equal, proof markets want to be as flexible as possible to meet the needs of different applications. A zkBridge connected to Ethereum might require a final proof in a scheme such as Groth16 for cheap on-chain verification. In contrast, a zkML (zero-knowledge machine learning) model might prefer a Nova-based proof system, which is optimized for recursive proving. Flexibility can also show up in the integration process: the market can offer a zkVM (zero-knowledge virtual machine) that proves computations written in high-level languages such as Rust, making integration simpler for developers.

Designing a proof market that is efficient, decentralized, and flexible enough to support a wide range of zero-knowledge proof (ZKP) applications is a difficult and still largely unexplored research area. Solving this problem requires careful incentive and technical design. Below, we share some early considerations and trade-offs in proof market design:

- Incentive and Punishment Mechanisms
- Matching Mechanisms
- Custom Circuits vs. Zero-Knowledge Virtual Machine (zkVM)
- Single Proof vs. Aggregated Proof
- Hardware Heterogeneity
- Operator Diversity
- Discounts, Derivatives, and Order Types
- Privacy
- Progressive and Persistent Decentralization

Incentive and Punishment Mechanisms

Provers must be subject to incentives and penalties to maintain market integrity and performance. The simplest way to introduce them is through collateral (staking) and slashing. Provers can be rewarded through the bids attached to proof requests, and possibly through token inflation rewards.

A market can require a minimum stake to join the network to prevent Sybil attacks. Provers that submit invalid proofs or fail to deliver proofs can be slashed, forfeiting staked tokens. The penalty may also scale with the bid amount: delaying a higher-value request is economically more significant, so it carries a larger penalty. If slashing is too harsh, a reputation system can be used instead. =nil; currently uses a reputation-based system to hold provers accountable, making it unlikely that dishonest or poorly performing provers will be matched by its matching engine.
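A minimum stake, bid-proportional slashing, and a reputation fallback can be combined roughly as follows. Every constant here (minimum stake, slash fraction, reputation decay and recovery, matching threshold) is a hypothetical parameter chosen for illustration; this is not how =nil; or any other market actually scores provers.

```python
from dataclasses import dataclass

MIN_STAKE = 1_000.0          # hypothetical minimum stake to join (Sybil resistance)
SLASH_FRACTION_OF_BID = 0.5  # hypothetical: penalty is 50% of the missed request's bid
REPUTATION_DECAY = 0.8       # hypothetical multiplier applied after each failure
REPUTATION_RECOVERY = 0.05   # hypothetical bonus after each on-time delivery
MATCH_THRESHOLD = 0.5        # hypothetical: provers below this score are not matched


@dataclass
class Prover:
    stake: float
    reputation: float = 1.0  # score in [0, 1]


def can_join(stake: float) -> bool:
    return stake >= MIN_STAKE


def penalize_missed_proof(prover: Prover, bid: float) -> float:
    """Slash in proportion to the bid (capped at remaining stake) and cut reputation."""
    penalty = min(prover.stake, SLASH_FRACTION_OF_BID * bid)
    prover.stake -= penalty
    prover.reputation *= REPUTATION_DECAY
    return penalty


def record_delivery(prover: Prover) -> None:
    prover.reputation = min(1.0, prover.reputation + REPUTATION_RECOVERY)


def is_matchable(prover: Prover) -> bool:
    return prover.stake >= MIN_STAKE and prover.reputation >= MATCH_THRESHOLD


p = Prover(stake=2_000.0)
for _ in range(4):  # four missed proofs on hypothetical 400-token bids
    penalize_missed_proof(p, bid=400.0)
print(p.stake, is_matchable(p))  # 1200.0 False
```

In this example the prover still meets the stake requirement after four misses, but its reputation has fallen below the threshold, so the matching engine stops assigning it work. The cap at remaining stake also shows why slashing alone can fall short for large bids.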

Matching Mechanisms

Matching is the problem of connecting supply and demand in the market. Designing the matching engine, that is, defining the rules by which provers and proof requests are paired, will be one of the most difficult and important tasks in building a proof market. Matching can be done through auctions or order books.

Auctions: Provers bid on proof requests to determine which prover wins the right to generate the proof. The challenge with auctions is that if the winning bidder fails to return a proof, the auction must be rerun (the runner-up cannot be enlisted immediately to generate the proof).

Order book: Applications submit bids to buy proofs to an open database, and provers submit asks to sell proofs. A bid and an ask can be matched if two conditions are met: 1) the application's bid price is at least the prover's asking price, and 2) the prover's promised delivery time is within the time the bid allows. In other words, the application submits a computation to the order book, specifying the maximum reward it is willing to pay and the longest it is willing to wait for the proof. Any prover whose asking price and delivery time fall within these limits is eligible to be matched. Order books are better suited to low-latency use cases, because bids on an order book can be filled immediately.
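The two matching conditions can be expressed directly in code. The sketch below is a hypothetical single-bid matcher: the field names are made up, both conditions are treated as inclusive (ask price at most the bid, delivery time at most the deadline), and the tie-break (cheapest, then fastest) is an assumption rather than any market's actual rule.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Bid:
    """Submitted by an application."""
    request_id: str
    max_price: float      # most the application will pay for the proof
    max_latency_s: float  # longest it will wait for the proof


@dataclass
class Ask:
    """Submitted by a prover."""
    prover_id: str
    price: float       # price the prover asks
    delivery_s: float  # delivery time the prover commits to


def match(bid: Bid, asks: List[Ask]) -> Optional[Ask]:
    """Return the best ask that satisfies both the price and the latency condition."""
    eligible = [
        a for a in asks
        if a.price <= bid.max_price and a.delivery_s <= bid.max_latency_s
    ]
    if not eligible:
        return None
    # Hypothetical tie-break: lowest price first, then fastest delivery.
    return min(eligible, key=lambda a: (a.price, a.delivery_s))


asks = [
    Ask("p1", price=8.0, delivery_s=90.0),   # cheap but too slow
    Ask("p2", price=9.0, delivery_s=45.0),
    Ask("p3", price=12.0, delivery_s=10.0),  # fast but too expensive
    Ask("p4", price=9.0, delivery_s=30.0),
]
best = match(Bid("req-1", max_price=10.0, max_latency_s=60.0), asks)
print(best.prover_id if best else None)  # p4
```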

Proof markets are multi-dimensional: applications request computations within certain price and time ranges. Applications may also have dynamic preferences about proof latency, with the price they are willing to pay falling the longer they wait. Although order books are efficient, they struggle to capture this complexity in user preferences.
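A latency-sensitive preference is hard to express as a single price-and-deadline order. As a hypothetical illustration, suppose an application's willingness to pay falls linearly from a maximum price to zero at its deadline, and the application picks the ask that leaves it the most surplus. Both the linear curve and the surplus-maximizing rule are assumptions, not a documented market design.

```python
from typing import List, Optional, Tuple


def willingness_to_pay(latency_s: float, max_price: float, deadline_s: float) -> float:
    """Hypothetical linear decay: full price at 0 s, nothing at or after the deadline."""
    if latency_s >= deadline_s:
        return 0.0
    return max_price * (1.0 - latency_s / deadline_s)


def best_by_surplus(
    asks: List[Tuple[str, float, float]], max_price: float, deadline_s: float
) -> Optional[str]:
    """Pick the ask (prover_id, price, delivery_s) with the largest non-negative surplus."""
    best_id, best_surplus = None, 0.0
    for prover_id, price, delivery_s in asks:
        surplus = willingness_to_pay(delivery_s, max_price, deadline_s) - price
        if surplus >= best_surplus:
            best_id, best_surplus = prover_id, surplus
    return best_id


asks = [("fast", 6.0, 20.0), ("medium", 2.0, 70.0), ("slow", 1.0, 95.0)]
print(best_by_surplus(asks, max_price=10.0, deadline_s=100.0))  # fast
```

A plain order book with a price cap of 10 and a deadline of 100 s, filled cheapest-first, would pick "slow" here, even though this application values the fast proof more.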

Other matching models can be borrowed from other decentralized markets, such as Filecoin’s decentralized storage market which uses off-chain negotiation, and Akash’s decentralized cloud computing market which uses reverse auctions. In Akash’s market, developers (known as “tenants”) submit computing tasks to the network, and cloud providers bid on the workload. The tenant can then choose which bid to accept. Reverse auctions work well for Akash because workload latency is not important, and the tenant can manually select the bid they want. In contrast, a proof market needs to run quickly and automatically, making reverse auctions a suboptimal matching system for proof generation.

The protocol may restrict the types of bids some provers can accept. For example, a prover with a low reputation score may be barred from matching with large bids.

The protocol must also guard against attack vectors introduced by permissionless provers. In some cases, provers can mount a proof-delay attack: by delaying or withholding a proof, a prover can expose the protocol or its users to certain economic attacks. If the attack is profitable enough, token slashing or reputation penalties may not deter a malicious prover. In the case of proof delays, rotating proof generation rights to a new prover can minimize downtime.
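Rotating proof generation rights after a missed deadline can be modeled as a timed assignment over an ordered list of eligible provers. This is a minimal sketch under assumed rules: each prover gets a fixed window, the next candidate's window starts when the previous one expires, and the candidate list and timeout are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ProofAssignment:
    request_id: str
    candidates: List[str]     # hypothetical ordered fallback list of eligible provers
    timeout_s: float          # window each prover gets before rights rotate
    assigned_at: float = 0.0  # start of the current prover's window
    index: int = 0
    missed: List[str] = field(default_factory=list)  # provers whose window expired

    def holder(self) -> Optional[str]:
        """Prover currently holding proof generation rights, or None if all have timed out."""
        return self.candidates[self.index] if self.index < len(self.candidates) else None

    def tick(self, now: float) -> Optional[str]:
        """Advance to `now`, rotating rights past every expired window."""
        while self.holder() is not None and now - self.assigned_at > self.timeout_s:
            self.missed.append(self.holder())
            self.index += 1
            self.assigned_at += self.timeout_s
        return self.holder()


job = ProofAssignment("batch-7", ["p1", "p2", "p3"], timeout_s=30.0)
print(job.tick(10.0))  # p1
print(job.tick(31.0))  # p2
print(job.tick(65.0))  # p3
print(job.missed)      # ['p1', 'p2']
```

The `missed` list is where a market could hook in the slashing or reputation penalties discussed earlier, since rotation alone limits downtime but does not punish the delaying prover.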

Custom Circuits vs. Zero-Knowledge Virtual Machine (zkVM)

A proof market can provide custom circuits for each application, or it can provide a general-purpose zero-knowledge virtual machine. Custom circuits carry higher integration and financial costs but can give applications better performance. Proof markets, applications, or third-party developers can all build custom circuits and, by offering them as a service, earn a share of network revenue, as is the case with =nil;.

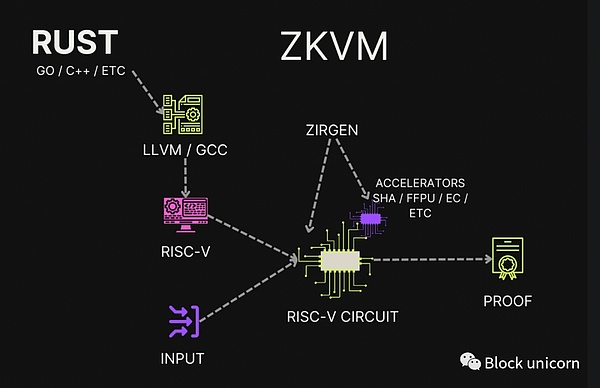

Although slower, STARK-based zero-knowledge virtual machines (zkVMs) such as RiscZero let application developers write verifiable programs in high-level languages such as Rust or C++. zkVMs can include accelerators for common ZK-unfriendly operations, such as hashing and elliptic-curve addition, to improve performance. Whereas a proof market built on custom circuits may need a separate order book for each circuit, leading to prover fragmentation and specialization, a zkVM-based market can use a single order book to facilitate and prioritize computation on the zkVM.

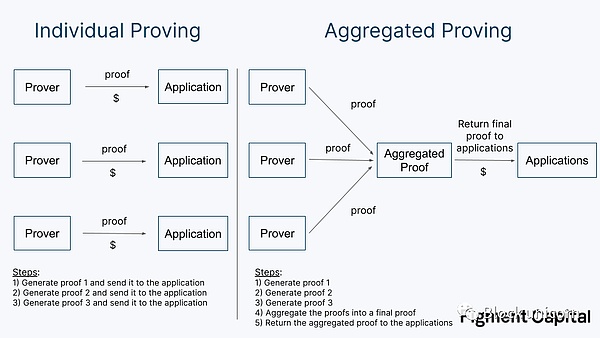

Single Proof vs. Aggregated Proof

Once generated, proofs must be returned to the application. For on-chain applications, this requires costly on-chain verification. Proof markets can either return each proof individually or aggregate multiple proofs into one before returning it, splitting the gas cost among them.

Aggregation adds latency: multiple proofs must finish before they can be aggregated, and the aggregation step itself requires additional computation before the result can be returned.

Proof markets must decide how to balance latency and cost: proofs can be returned quickly at a higher cost, or aggregated and returned at a lower cost. We expect proof markets to require proof aggregation, but as volume grows, the time needed to aggregate proofs may shrink.
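The cost-latency trade-off is simple arithmetic. In the sketch below, every gas figure and rate is a hypothetical placeholder, and the model assumes one on-chain verification per aggregate plus a small fixed per-proof overhead; real costs depend on the proof system and the chain.

```python
def per_proof_gas_single(verify_gas: float) -> float:
    """Each proof is verified on-chain on its own."""
    return verify_gas


def per_proof_gas_aggregated(n: int, agg_verify_gas: float, per_proof_overhead_gas: float) -> float:
    """One verification of the aggregate is shared by n proofs, plus per-proof overhead."""
    return (agg_verify_gas + n * per_proof_overhead_gas) / n


def worst_case_wait_s(n: int, arrivals_per_s: float, aggregation_s: float) -> float:
    """The first proof in a batch waits for n - 1 more proofs to arrive, then for aggregation."""
    return (n - 1) / arrivals_per_s + aggregation_s


# Hypothetical parameters, for illustration only.
print(per_proof_gas_single(300_000.0))                    # 300000.0
print(per_proof_gas_aggregated(10, 350_000.0, 5_000.0))   # 40000.0
print(worst_case_wait_s(10, arrivals_per_s=0.5, aggregation_s=60.0))  # 78.0
print(worst_case_wait_s(10, arrivals_per_s=3.0, aggregation_s=60.0))  # 63.0
```

In this toy model, raising the arrival rate from 0.5 to 3 proofs per second cuts the batching wait from 18 s to 3 s, which is one way higher volume can shorten the delay that aggregation adds.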

Hardware Heterogeneity

The proof process for large computations is slow. So what happens when an application wants to generate computationally-intensive proofs quickly? Provers can use more powerful hardware, such as FPGAs and ASICs, to accelerate proof generation. While this is great for performance, specialized hardware may limit the set of possible operators, hindering decentralization. The proof market needs to decide on the hardware that its operators will run.

Block unicorn note: FPGAs (Field-Programmable Gate Arrays) are a type of specialized computing hardware that can be reprogrammed to perform specific digital computing tasks. This makes them particularly useful in applications where specific types of computation (such as encryption or image processing) are required.

ASICs (Application-Specific Integrated Circuits) are hardware designed to perform one specific task very efficiently. For example, Bitcoin mining ASICs are built specifically for the hashing computations that Bitcoin mining requires. The trade-off is flexibility: unlike FPGAs, ASICs can only be used for the task they were designed for.

Another issue is prover homogeneity: proof markets must decide whether all provers will use the same hardware or whether different setups will be supported. If all provers compete on a level playing field using readily available hardware, the market may be easier to keep decentralized. Given the novelty of ZK hardware and the market's need for performance, we expect proof markets to remain hardware-agnostic, allowing operators to run whatever infrastructure they choose. However, more work is needed on how prover hardware diversity affects prover centralization.

Operator Diversity

Developers must define the requirements for operators to enter and remain active market participants, which will affect operator diversity, including their size and geographic distribution. Some protocol-level considerations include:

Do provers need to be whitelisted, or is participation permissionless? Will there be a cap on the number of provers who can participate? Do provers need to stake tokens to enter the network? Are there minimum hardware or performance requirements? Will operators be limited in the market share they can hold? If so, how will that limit be enforced?

The market for institutional-level operators may have different market entry requirements than the market for retail participation. The proof market should define what a healthy operator mix looks like and work backwards from there.

Discounts, Derivatives, and Order Types

Proof market prices may fluctuate with periods of high or low demand. Price volatility creates uncertainty: applications need to predict future proof prices to pass these costs on to end users. An application does not want to charge users a $0.01 transaction fee only to find that proving the transaction costs $0.10. Layer 2s face the same problem, since they must pass the cost of future calldata (the transaction data they post to Ethereum, whose gas cost depends on its size) on to users. Some have proposed that layer 2s solve this with block space futures: by pre-purchasing block space at a fixed price, a layer 2 can also offer users more stable fees.

The same need exists in proof markets. Protocols such as validity rollups may generate proofs at a fixed frequency. If a rollup needs to generate a proof every hour for a year, can it submit this bid all at once, instead of submitting a new bid on short notice each time and risking price increases? Ideally, it could pre-order proving capacity. If so, should proof futures be offered within the protocol, or should other protocols or centralized providers be allowed to build such services on top of it?

What about discounts for large or predictable orders? If a protocol brings significant demand to the market, should it receive a discount or must it pay the public market price?

Privacy

The market can offer private computation, although privately outsourcing proof generation is challenging. Applications need a secure channel to send private inputs to untrusted provers. Once the inputs arrive, provers need a secure computing sandbox to generate proofs without leaking them; secure enclaves (hardware-isolated execution environments for sensitive data) are a promising direction. In fact, Marlin has already experimented with private computation using secure enclaves on Nvidia A100 GPUs on Azure.

Progressive and Persistent Decentralization

The proof market needs to find the best path toward decentralization. How should the first third-party provers enter the market? What are the concrete steps to achieve decentralization?

A related issue is sustaining decentralization. One challenge facing proof markets is malicious bidding by provers. A well-funded prover may choose to operate at a loss, quoting below-market prices to squeeze other operators out of the market, and then scale up and raise prices. Another form of malicious bidding is running a large number of nodes while bidding at market prices, so that random selection routes a disproportionate share of proof requests to this operator.
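The second form of malicious bidding follows from how uniform random selection works: if every node bidding at the market price is equally likely to be picked, an operator's share of requests tracks its share of nodes. A small simulation with hypothetical operator names and node counts makes this concrete; it assumes one node is drawn uniformly at random per request.

```python
import random
from collections import Counter
from typing import Dict


def request_shares(nodes_per_operator: Dict[str, int], rounds: int, seed: int = 0) -> Dict[str, float]:
    """Assign each request to a uniformly random node; return each operator's share of requests."""
    rng = random.Random(seed)
    node_owners = [op for op, count in nodes_per_operator.items() for _ in range(count)]
    wins = Counter(rng.choice(node_owners) for _ in range(rounds))
    return {op: wins[op] / rounds for op in nodes_per_operator}


# Hypothetical market: one operator runs 8 nodes, two others run 1 node each,
# and all nodes bid the same market price.
shares = request_shares({"big_operator": 8, "op_b": 1, "op_c": 1}, rounds=10_000)
print(shares)  # big_operator receives close to 80% of requests
```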

Summary

Other design decisions include how bids are submitted and whether proof generation can be split among multiple provers. Overall, proof markets have a huge design space that must be studied carefully to build an efficient and decentralized market. We look forward to working with leading teams in this field to identify the most promising approaches.

Operating Zero-Knowledge Infrastructure

So far, we have studied the design considerations for building decentralized proof networks and proof markets. In this section, we evaluate which operators are best suited to participate in proof networks and share some thoughts on the supply side of zero-knowledge proof generation.

Miners and Validators

There are currently two main types of blockchain infrastructure providers: miners and validators. Miners run nodes on proof-of-work networks like Bitcoin. These miners compete to produce sufficiently rare hash values. The more powerful their computers and the more computers they have, the more likely they are to find rare hash values and receive block rewards. Early Bitcoin miners mined on home computers using CPUs, but as the network grew and block rewards became more valuable, mining became a specialized business. Nodes were consolidated for economies of scale, and hardware setups became increasingly specialized. Today, miners almost exclusively run Bitcoin mining ASICs (application-specific integrated circuits) in data centers near cheap sources of energy.

The Rise of Proof of Stake and the Need for Validators

Validators play a role in proof of stake (PoS) blockchains similar to that of miners in proof of work (PoW) blockchains. They propose blocks, execute state transitions, and participate in consensus. However, unlike Bitcoin miners, they do not try to produce as many hashes (hashrate) as possible to increase their chances of creating a block. Instead, validators are randomly selected to propose blocks based on the value of the assets they have staked. This change removes the need for energy-intensive, specialized hardware, allowing a more widely distributed set of operators to run validators, even in the cloud.

The more subtle change introduced by PoS is that it turns blockchain infrastructure into a service business. In PoW, miners operate in the background, largely invisible to users and investors (can you name a few Bitcoin miners?). They have only one client: the network itself. In PoS, validators (including node operators in protocols such as Lido and Rocket Pool) secure the network with staked tokens, but they also have a second client: the stakers. Token holders look for operators they can trust to run infrastructure securely and reliably on their behalf and earn staking rewards. Because validator income is tied to the stake they can attract, validators operate like service companies. They build brands, employ sales teams, and develop relationships with individuals and institutions that can stake tokens with them. This makes the staking business fundamentally different from the mining business, and this key difference is one reason why the largest PoW and PoS infrastructure providers are entirely different companies.

ZK Infrastructure Companies

Over the past year, many companies specializing in hardware acceleration for zero-knowledge proofs (ZKPs) have emerged. Some build hardware to sell to operators, while others run the hardware themselves and become new infrastructure providers. The best-known ZK hardware companies currently include Cysic, Ulvetanna, and Ingonyama. Cysic plans to build ASICs (application-specific integrated circuits) that accelerate common ZKP operations while keeping the chips flexible enough for future software innovation. Ulvetanna is building an FPGA (field-programmable gate array) cluster to serve applications with particularly demanding proving requirements. Ingonyama is researching algorithmic improvements and building a CUDA library for ZK acceleration, with plans to eventually design an ASIC.

Block unicorn note: CUDA (Compute Unified Device Architecture) is a parallel computing platform and application programming interface (API) developed by NVIDIA for its graphics processing units (GPUs). CUDA libraries are collections of precompiled routines built on CUDA that run parallel operations on NVIDIA GPUs to speed up processing. Examples include libraries for linear algebra, Fourier transforms, and random number generation.

ASIC: ASIC stands for application-specific integrated circuit, a type of integrated circuit designed to meet the requirements of a particular application. Unlike general-purpose processors (such as CPUs) that can perform many kinds of operations, an ASIC's task is fixed at design time. As a result, ASICs usually achieve higher performance or better energy efficiency on the tasks they were designed for.

Who will operate ZK infrastructure? We believe the companies that excel at it will depend mainly on the prover incentive model and the performance requirements. The market will be split between staking companies and new ZK-native teams. For applications that require the highest-performance provers or extremely low latency, ZK-native teams that can win proving races will take the lead. We expect such extreme requirements to be the exception rather than the norm; the rest of the market will be dominated by staking businesses.

Why aren’t miners well suited to operating ZK infrastructure? After all, ZK proving, especially for large circuits, has much in common with mining: it consumes a lot of energy and computing resources and may require specialized hardware. Even so, we do not believe miners will become early leaders in proving.

First, proof-of-work hardware does not transfer well to ZK proving. Bitcoin ASICs, by definition, cannot be repurposed. Many of the GPUs used to mine Ethereum before the Merge (such as NVIDIA CMP HX cards) were designed specifically for mining, so they perform poorly on ZK workloads. In particular, their data bandwidth is limited, so the parallelism the GPU offers brings little real benefit. Miners hoping to enter the proving business would have to build up ZK-suitable hardware from scratch.

Mining companies would also be at a brand-recognition disadvantage under stake-based proving. Their biggest advantage is access to cheap energy, which would let them charge lower fees or participate in proving markets more profitably, but this is unlikely to outweigh the challenges they face.

Finally, miners are used to static requirements. Bitcoin and Ethereum rarely changed their hash-function requirements in any significant way, nor did they require operators to adapt to other protocol changes affecting their mining setups (the Merge excepted). ZK proving, by contrast, requires staying alert to advances in proving technology that can change hardware setups and optimizations.

Stake-based proving models are a natural fit for validator firms. Individual and institutional investors in ZK applications will delegate their tokens to infrastructure providers to earn rewards, and staking businesses already have the teams, experience, and relationships to attract large amounts of delegated stake. Even on protocols that do not support delegated staking, many validator firms offer white-label validation services, running infrastructure on behalf of other parties, which is common practice on Ethereum.

Validators do not have access to the cheap electricity that miners enjoy, which makes them poorly suited to the most energy-intensive tasks. The hardware needed to run provers efficiently at scale may be more complex than a typical validator setup, but it will likely fit within validators’ existing cloud or dedicated-server infrastructure. However, like miners, these firms lack in-house ZK expertise and would struggle to compete in proving races. Outside stake-based proving, the business model for operating ZK infrastructure differs from that of staking and lacks its strong positive feedback effects. We expect ZK-native infrastructure providers to dominate high-performance proving tasks that are not stake-based.

Summary

Today, most provers are run by the teams building the applications that need them. As more ZK networks launch and decentralize, new operators will enter the market to meet proving demand. Who these operators are will depend on each protocol's prover selection model and proving requirements.

Staking infrastructure firms and native ZK infrastructure operators are most likely to dominate this new market.

Decentralized proving is an exciting new area for blockchain infrastructure. If you are building ZK applications or infrastructure, we would love to hear your thoughts and suggestions.
