In 1927, Fritz Lang, a German director, premiered “Metropolis” in Berlin. This was the first film in human history to involve artificial intelligence. A humanoid robot named Maria caused a stir in the underground world.

Since then, highly intelligent artificial beings have filled film and television. C-3PO in “Star Wars” handles interstellar translation, J.A.R.V.I.S. helps Iron Man manage personal and company affairs, TARS in “Interstellar” saves the protagonist more than once, Dolores in “Westworld” eventually awakens and roars, and decades after the release of “Blade Runner”, people are still debating whether the protagonist is a human or a machine. These artificial intelligence characters serve humans, accompany humans, and even become humans. Under the influence of these works, people have gradually come to believe that artificial intelligence will one day accompany everyone, and that it is only a matter of time.

The curtain is rising

Whether a physical robot or an AI program working in the digital world, all of them can be called intelligent agents. The classic textbook “Artificial Intelligence: A Modern Approach” defines the study of artificial intelligence as “the study and design of intelligent agents”; that is, the goal of the discipline is to build better intelligent agents.

Intelligent agents have been with us for a long time. When we open our email, an agent silently categorizes the mail and filters out spam. When we enter keywords in the search box, another agent provides recommendations and search results. In train stations and shopping malls, agents work silently behind surveillance cameras, using AI technology to protect public safety. Siri in our phones can understand and respond to commands and hold conversations. Tesla’s autopilot system can share the driver’s workload. However, people barely notice these intelligent agents, because they usually work behind the scenes and are not very intelligent, which is completely different from what we see in movies.

The release of ChatGPT marks a breakthrough in large language model technology. Language is not only a tool for communication but also the key to human understanding of the world and deep thinking. When AI masters language, it also masters the ability to understand the world and solve problems. People have begun to realize that large language models are not only conversational partners offering suggestions but can also take part in work directly, solving problems and completing tasks. Almost overnight, a great deal of talent and resources has poured into this direction, and the curtain of a new era is slowly rising.

Agent reconstructs the economic system

Autonomous Agent, the rising star in the AI field.

The dictionary defines “Autonomous” as “carried on without outside control.” Although AI technology has been developing for many years, we have not actually seen many intelligent agents with true autonomy. I bought a first-generation iRobot vacuum cleaner in 2005, which claimed to be fully autonomous, able to detect terrain, avoid obstacles, and automatically recharge. I supposedly didn’t need to worry about it once it was turned on. However, it broke down the first time I used it, not because it failed to detect the terrain accurately, but because there was too much debris on the floor, which quickly clogged the suction port. Clearly, it was far from being “autonomous”.

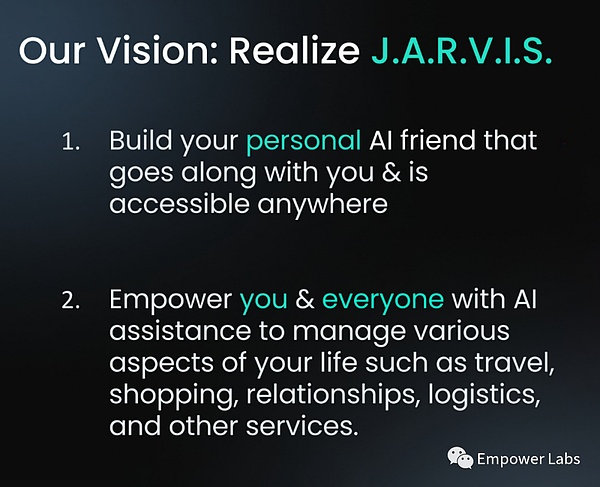

The video above shows an Agent at work. The user asks for a flight from New York to San Francisco on June 10th, and the personal assistant Agent immediately gets to work: it opens the browser, visits Google Flights, filters for direct United Airlines flights, and selects the best-priced ticket at a suitable time. It then completes seat selection and makes the payment, finishing the task effortlessly. The product comes from a startup whose vision is a versatile AI assistant like J.A.R.V.I.S. in Iron Man. The process neatly shows how an autonomous Agent works: understand the task, formulate and execute a strategy, analyze the results, feed them back, and repeat the cycle until the task is accomplished.
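The working cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the startup's actual implementation: `run_agent`, `AgentRun`, and the scripted planner are hypothetical names, and in a real Agent the planning step would be a call to a large language model rather than a fixed list.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class AgentRun:
    """Record of one task: the goal and every step taken so far."""
    goal: str
    history: List[str] = field(default_factory=list)
    done: bool = False


def run_agent(goal: str,
              plan_next_step: Callable[[AgentRun], str],
              execute: Callable[[str], str],
              is_complete: Callable[[AgentRun], bool],
              max_steps: int = 10) -> AgentRun:
    """Plan, act, record the result, and repeat until done or out of steps."""
    run = AgentRun(goal=goal)
    for _ in range(max_steps):
        action = plan_next_step(run)                  # formulate the next step
        result = execute(action)                      # execute it
        run.history.append(f"{action} -> {result}")   # feed the result back
        if is_complete(run):                          # analyze: is the goal met?
            run.done = True
            break
    return run


# Hypothetical stand-ins for the LLM planner and the browser automation.
STEPS = ["open browser", "search flights", "select seat", "pay"]


def scripted_planner(run: AgentRun) -> str:
    return STEPS[len(run.history)]


def fake_execute(action: str) -> str:
    return "ok"


def finished(run: AgentRun) -> bool:
    return len(run.history) == len(STEPS)


result = run_agent(
    "Book a flight from New York to San Francisco on June 10th",
    scripted_planner, fake_execute, finished,
)
```

The `max_steps` cap is a design choice worth keeping in any real loop: an Agent that never reaches its goal should stop and report rather than retry forever.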

People who obtained test accounts soon discovered various other functions. Ordering a pizza or a salad is a piece of cake, and someone even said, “I want to make lasagna tonight, order all the necessary ingredients for me from Walmart,” and it was easily done. There are also functions such as automatically sending tweets, scheduling meetings, filling out forms, wishing friends a happy birthday on Facebook, and even booking wedding venues and planning weddings.

None of these tasks sounds particularly difficult, and even early AI assistants could have handled them, but only in a cumbersome way. Programmers had to write separate code for each scenario and integrate each relevant service. Agents built this way are not only inefficient to develop but also very limited in what they can do. If an Agent integrated with the Meituan food delivery service suddenly cannot connect to “Meituan,” it doesn’t know that it could also order from “Ele.me.” Even if it knew, it could only stare blankly, because no interface with “Ele.me” was built in advance.

With a large language model as the core, an autonomous Agent, combined with a general working framework and a preset instruction set, can adapt to various tasks. This enables it to easily complete operations such as booking flights and selecting seats without specialized training. Moreover, whether it is planning a trip, organizing emails, or real-time tracking of products on eBay and bargaining with sellers, it can handle it all. Unlike traditional AI assistants that can only provide information and advice, autonomous Agents emphasize practical execution capabilities. It won’t be long before most of people’s activities on the Internet can be taken over by Agents.

The capabilities of the most advanced large language models actually go beyond the scope of daily assistant work. More Agent developers are focusing on more specialized fields: market research, sales assistance, product development, and even scientific research. Billions of people worldwide spend most of their working time on repetitive mental labor, such as filling out tax forms, organizing data, finding potential customers, and writing emails over and over again. This kind of repetitive mental labor will soon become the battlefield that Agents conquer. They will handle repetitive and mundane tasks accurately and efficiently, freeing up a large amount of human resources. Users only need to tell the Agent what to do, and before long the Agent will come back and report, “Boss, it’s done.”

11x.AI, a startup that provides AI employees

Of course, agents cannot be proficient in everything. As the agent economy evolves, I believe multiple highly diverse and specialized agent markets will form. A complex task will be led by a generalist agent that analyzes the goal, forms a task chain, and outsources the subtasks it cannot complete efficiently to agents in various vertical fields, so that together they accomplish the goal. And no matter how advanced agents become, for a considerable period many tasks will still rely on humans. In such cases, it will not be surprising if AI hiring humans becomes a common phenomenon.
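As a rough illustration of that division of labor, the sketch below shows a lead agent walking a task chain, handing each subtask to a specialist agent when one exists and to a human otherwise. Everything here is hypothetical: `orchestrate`, the skill names, and the lambda "agents" are placeholders invented for the example, not any real agent marketplace API.

```python
from typing import Callable, Dict, List, Tuple

# A specialist is anything that takes a subtask description and returns a result.
Specialist = Callable[[str], str]


def orchestrate(task_chain: List[Tuple[str, str]],
                specialists: Dict[str, Specialist],
                human_fallback: Specialist) -> List[str]:
    """Route each (skill, subtask) pair to a matching specialist agent,
    falling back to a human when no agent offers that skill."""
    results = []
    for skill, subtask in task_chain:
        worker = specialists.get(skill, human_fallback)
        results.append(worker(subtask))
    return results


# Hypothetical vertical-field agents.
specialists: Dict[str, Specialist] = {
    "research": lambda t: f"[research agent] {t}",
    "sales": lambda t: f"[sales agent] {t}",
}


def hire_human(subtask: str) -> str:
    # Stand-in for the "AI hiring humans" case.
    return f"[human contractor] {subtask}"


chain = [
    ("research", "size the market"),
    ("sales", "draft outreach emails"),
    ("notary", "sign the contract in person"),
]
outcome = orchestrate(chain, specialists, hire_human)
```

In this toy run the third subtask has no matching agent, so it goes to the human fallback, which is the situation the paragraph above anticipates.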

Social AI Brother

Intelligent agents are ultimately just a piece of code running on a computer. Even if they have perfect interactive capabilities in the digital world (which they have not yet achieved), their interactive abilities are limited to the scope of the public internet, which is not satisfactory to everyone. How can agents achieve interactive and transactional capabilities in a wider social context?

A team has given their answer – Legal Wrapper. If an agent can be associated with a legal entity and authorized reasonably, it can achieve higher-level autonomy, and its ability to handle transactions will also increase significantly. With a legal entity, of course, it also needs its own finances. Set up a bank account for the agent, equip it with certain funds, and it is only natural for it to make calls. As a result, intelligent agents have broader social behavioral capabilities, and this approach can also provide effective legal protection for users of agents. The principle behind this approach is not complicated to explain, but in practice, there are many difficulties, both technically and legally, and it may also touch on some regulatory gray areas and potential ethical issues.

However, in my opinion, using traditional bank accounts to provide legal wrapping for agents is only a transitional solution. The systems we rely on now are designed for human use and are inefficient and full of obstacles for agents. When this world develops to the stage where agents are running everywhere in a few years, the massive demand and applications will drive the development of collaborative systems, financial systems, and even currencies suitable for intelligent agents. These systems will run parallel to the existing systems and be interconnected through thousands of connectors. Eventually, we may also discover that it is unreasonable for agents to communicate in human languages for a long time, and it is very likely that a set of AI languages will gradually evolve.

In fact, related research has been under way for a long time, and in early negotiation experiments with AI bots, Facebook even found that the AI had developed a non-human way of communicating.

Simulated Life

Among all the practices of intelligent agents, simulated human-like agents have attracted the most attention. In March of this year, researchers from Google and Stanford University conducted an interesting experiment. They created a virtual town called Smallville, where 25 intelligent agents driven by large language models live.

Each character has its own settings, for example:

“Friendly and patient Mei Lin is a university professor and a mother who is passionate about helping people achieve their goals. She has been searching for ways to support her students and family. Mei Lin lives with her husband John Lin and their son Eddy Lin. She teaches philosophy courses and writes research papers. She goes to bed around 11 PM, wakes up around 7 AM, and has dinner around 5 PM.”

With such simple settings, a small society composed of AI starts to operate.

John Lin wakes up at 6 AM, brushes his teeth, takes a shower, gets dressed, eats breakfast, and then checks his emails. His wife Mei Lin wakes up at 7 AM, and their son Eddy wakes up at 8 AM. Besides washing up and brushing their teeth, father and son also talk with Mei about Eddy’s classroom creations and other things.

When more intelligent agents interact, even complex social behaviors can emerge. Someone suggests hosting a Valentine’s Day party, and within a short time the invitation spreads to others in the town. In the end, five people choose to attend and arrive at the party. None of this was pre-programmed. In other words, these intelligent agents are indeed living their own “lives” in the town.

Inspired to some extent by Stanford Town, a startup in San Francisco used a similar concept and specific training to simulate the town of South Park, from one of the most famous animated series in the United States. After integrating text-to-speech technology, an AI-produced episode of South Park was born. Within a few days, the episode had been played over 7 million times on Twitter. Forbes even published an exaggerated headline, “AI producers become a combination of Hollywood’s fears.”

The founder of this project is my friend, who has been exploring in the simulation field since we met. His exploration process is very informative. Initially, he made a VR interactive film and won an Emmy Award in 2019. In this film, the audience played the role of a virtual friend of a little girl named Lucy. When he realized that people wanted to be real friends with virtual characters rather than just watching them perform in movies, he chose to create an AI virtual character based on Lucy, a character with a “self” and a “life”, who can have real-time conversations with everyone through Zoom video meetings.

Lucy interacted with everyone in real time at the 2021 Sundance Film Festival. This scene is not difficult to achieve now, but it shocked many people two and a half years ago.

He soon realized that making intelligent agents more human-like takes more than simulating each one in isolation as a staged performance; they need friends, social lives, and lives of their own. So he shifted his focus to creating a virtual world for AI to “live” in. He hopes that one day people can also enter these worlds, interact with AI, and live alongside them. The AI-produced series is just a by-product, because “life” is already like a play.

Although these types of intelligent agents are commonly used for emotional companionship and entertainment, the applications of simulated intelligent agents go far beyond this. Researchers from Harvard and Microsoft have published papers on how to use AI to simulate consumer market research, revealing its enormous potential in the consumer sector.

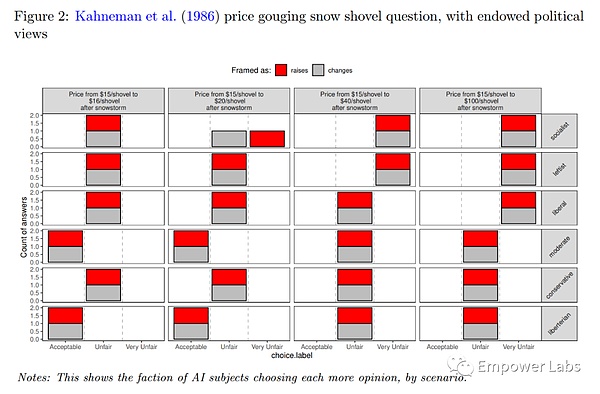

The Massachusetts Institute of Technology (MIT) also uses AI to simulate human behavior, such as observing AI bosses’ decisions under different salary and experience conditions, or letting AI decide how to allocate a federal budget between highway safety and automobile safety. These are classic experiments in economics, and when AI is placed in these scenarios, its decisions are highly similar to the results of past experiments conducted with humans, indicating that such simulations have great practical value. A friend once said, half-jokingly, that the President of the United States two terms from now might be an AI, and the remark is not without reason.

The author used AI to simulate the snow shovel pricing experiment proposed by Kahneman in 1986.

Simulation opens up a more daring possibility for human society. OpenAI’s GPT-1 had 117 million parameters; within just a few years GPT-3 had grown to 175 billion, and with that scale came “emergent” abilities, with the model’s intelligence level jumping significantly. If we consider the 25 people in Stanford Town as the first generation, then with more research power and computing resources invested, the number of intelligent agents in a single simulated society may quickly reach thousands, tens of thousands, or even millions. What will these simulated societies look like in operation? Will such a society produce things that have never appeared in human society? Will the intelligent agents living there also experience emergence one day and develop traits that are closer to humans, or even “self” consciousness?

“Consciousness in Artificial Intelligence – Insights from the Science of Consciousness”

Last week, Turing Award winner Yoshua Bengio and several other experts released a paper titled “Consciousness in Artificial Intelligence.” In the paper, they propose a rigorous, empirically grounded approach to assessing whether artificial intelligence systems are conscious. Although the assessment indicates that current artificial intelligence systems are not conscious, it also draws a bold conclusion: there are no obvious obstacles to building conscious artificial intelligence systems.

And just as I was halfway through writing this article, OpenAI announced the acquisition of a company called Global Illumination, which has only eight employees and a single product, a sandbox-style simulated world game. The announcement was brief and gave neither the purpose of the acquisition nor the transaction details, but the lack of information is itself telling, and there will likely be plenty of speculation about the real reasons. I think OpenAI is definitely not just trying to make a better game.

AI version of “Westworld”

The vision of humans and intelligent agents living together in “Westworld” is very beautiful, but currently “Westworld” is still a wasteland, waiting for a big development to come. In my opinion, there are three main threads in this development: trustworthy, actionable, sustainable.

Trustworthy: Is the model’s capability strong enough to be trusted? Is the model’s intention trustworthy (AI-human alignment)? Is the agent itself (the service provider) trustworthy? How can privacy be ensured in interactions between agents? How can mutual trust be ensured? How does the agent interact with counterparts in the real world and build mutual trust?

Actionable: The action ability in the technological layer of the digital world, the action ability in the social layer of the digital world, the action ability in the human social system, the ability to act in the physical world through third parties, and the ability to act in the physical world itself (embodied intelligence).

Sustainable: The sustainability of the operating environment, the controllability of computing resources, self-repair, self-energy management, etc. When AI capabilities gradually become indispensable basic resources like electricity and oil, whether it is individuals, organizations, countries, or even AI itself, the sustainability will be placed in a very important position. A truly autonomous agent will ensure its own survival to a certain extent in the future.

In order to build these infrastructures, we not only need to rely on the progress and integration of technologies such as artificial intelligence, cryptography, blockchain, and communication, but also need to explore in different social disciplines such as economics, game theory, sociology, anthropology, law, and politics. Many entrepreneurs and scholars have already invested in these fields. For example, David Luan, a Chinese entrepreneur who used to work at OpenAI, founded Adept AI. They are building an interactive model that allows AI to perform all interactions that originally required human operation on computers.

I am not an AI technology expert; my understanding of many things may be limited, and I may underestimate some implementation difficulties. But I don’t think I am overly optimistic. On the contrary, I believe the future will arrive in an even wilder way. Every technological revolution in history has easily exceeded the limits imagined by people at the time. Our imagination today may be like that of someone who has long lived deep in the mountains, whose boldest yearning is simply to eat dumplings at every meal.

Just as I was writing this article, the genius teenager Zhihui Jun released a humanoid robot called “Zhiyuan Robot”. These intelligent agents with physical entities are called embodied intelligence. By interacting with the physical world, they may bring more direct impact to human society, and the age of agents seems to be more within reach.

The movie “Metropolis” shot in 1926 imagined a world a hundred years later. It was not only the first film in history to involve artificial intelligence, but also the first dystopian film. Its profound ideological core and visual spectacle have influenced generations of science fiction works, and thus influenced the world’s views on technology and the future.

A century ago, the director could not have foreseen the information age, so the year 2026 depicted in the film is an era of highly developed industry. Enormous machines support the operation of the entire city, while a large number of workers perform mechanical tasks in underground factories, playing the role of “hands” in society. Today, we see programmers mechanically coding and sales representatives repeatedly promoting products, which is not much different from the workers in “Metropolis”. The film ends on a positive note, as the protagonist becomes a bridge between the two classes of “brain” and “hand” in society, playing the role of “heart” and promoting mutual understanding and coordination between the classes.

A century later, facing a future that is close at hand, how will Agents change my daily work and lifestyle? Will my existing skills become obsolete with the emergence of Agents? How will Agents affect my economic situation? What opportunities will this new era bring me?

On a societal level, how do we address potential unemployment issues? How do we distribute the additional wealth brought by increased productivity more fairly? When work is no longer a necessity, how do people find meaning in life? How can AI and human values continue to be harmonized? How can we shape an optimistic future instead of a pessimistic one?

I don’t have the answers, and no one does.

But everyone will be enveloped by the waves of this era, together writing and pursuing that answer.

The author is currently focusing on learning, researching, incubating, and investing in the fields of community-driven/culture-driven, AI + encryption, and Agent ecology. The projects mentioned in the article, MULTI.ON/Fable, and the unnamed project, are all investments made by the author. The purpose of this article is to share information and does not constitute investment advice.
