Every computing stack needs storage; otherwise, it’s all talk. As computing resources keep growing, a great deal of storage space sits underutilized. Distributed Storage Networks (DSNs) can coordinate and tap these latent resources, turning them into productive assets. These networks could bring the first true commercial vertical to the Web 3 ecosystem.

P2P Development History

The emergence of Napster brought P2P file sharing, in the strict sense, into the mainstream. Other file-sharing methods existed before it, but Napster’s MP3 sharing is what made P2P popular. Since then, distributed systems have developed rapidly. Because Napster relied on a centralized index, it was easy to shut down through legal action, but it laid the foundation for more robust file-sharing methods.

The Gnutella protocol built on this approach, with many different front ends using the network in their own ways. As a more decentralized, Napster-style query network, Gnutella proved more resistant to censorship, as events at the time confirmed. AOL, which had acquired the up-and-coming Nullsoft (where Gnutella was developed), quickly recognized the protocol’s potential and halted its release almost immediately. By then, however, the software had already leaked and was quickly reverse-engineered, giving rise to well-known front ends such as BearShare, LimeWire, and FrostWire. These applications ultimately failed because of their bandwidth requirements (bandwidth was scarce at the time) and a lack of liveness and content guarantees.

Remember LimeWire? It has since been reborn as an NFT marketplace (https://limewire.com/product)…

BitTorrent, which came later, was an upgrade thanks to the bidirectional nature of its protocol (peers upload pieces of a file while downloading others) and its ability to maintain a Distributed Hash Table (DHT). The DHT matters because it works like a distributed ledger of file locations: it records which peers hold which files so that other nodes in the network can look them up.
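To make the DHT idea concrete, here is a minimal sketch of a Kademlia-style lookup, the scheme BitTorrent’s Mainline DHT is based on. It is a toy: the network lives in one process, every node can see every other node, and the class and method names are hypothetical. Real implementations add k-bucket routing tables and iterative queries over the network, and a real infohash is the SHA-1 of the torrent’s info dictionary rather than the hash of a file name.

```python
import hashlib


def node_id(name: str) -> int:
    """Derive a 160-bit identifier from a string using SHA-1."""
    return int.from_bytes(hashlib.sha1(name.encode()).digest(), "big")


def xor_distance(a: int, b: int) -> int:
    """Kademlia measures closeness between two IDs with bitwise XOR."""
    return a ^ b


class ToyDHT:
    """In-memory stand-in for a DHT: no networking, global view of all nodes."""

    def __init__(self, node_names, k=3):
        self.k = k  # how many of the closest nodes store each key
        self.nodes = {node_id(n): {} for n in node_names}

    def closest_nodes(self, key: int):
        """Return the k node IDs with the smallest XOR distance to the key."""
        return sorted(self.nodes, key=lambda nid: xor_distance(nid, key))[: self.k]

    def announce(self, infohash: int, peer: str) -> None:
        """A peer announces it holds a file; the k closest nodes record it."""
        for nid in self.closest_nodes(infohash):
            self.nodes[nid].setdefault(infohash, set()).add(peer)

    def get_peers(self, infohash: int) -> set:
        """Ask the k closest nodes which peers hold the file and merge answers."""
        peers = set()
        for nid in self.closest_nodes(infohash):
            peers |= self.nodes[nid].get(infohash, set())
        return peers


dht = ToyDHT([f"node-{i}" for i in range(20)])
file_key = node_id("ubuntu.iso")  # hypothetical key; stands in for an infohash
dht.announce(file_key, "peer-A")
dht.announce(file_key, "peer-B")
print(sorted(dht.get_peers(file_key)))  # ['peer-A', 'peer-B']
```

Because both announcing and looking up go to the same "closest" nodes, no central index is needed: any node can compute where a key should live.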

After the birth of Bitcoin and blockchain, people began to wonder whether this new coordination mechanism could connect latent, unused resources into commodity networks, and distributed storage networks (DSNs) began to emerge.

In fact, few people realize that tokens in P2P networks did not start with Bitcoin and blockchain. The original creators of P2P networks recognized the following:

-

Building a useful protocol and then monetizing it is very difficult because of forks. Even if monetization is achieved through methods such as front-end advertising, forks will undercut it.

-

Usage is highly uneven. In Gnutella, for example, 70% of users shared no files, and 50% of requests were served by files hosted on the top 1% of hosts.
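Figures like these are typical of a power law. As a rough illustration (not a model fitted to Gnutella data), the sketch below assumes request popularity across hosts follows a Zipf distribution, where the host of rank r receives a share proportional to 1/r^s, and computes how much traffic the top 1% of hosts would serve. The exponent s and the host count are assumptions.

```python
def top_share(num_hosts: int, top_fraction: float, s: float = 1.0) -> float:
    """Fraction of all requests served by the top `top_fraction` of hosts,
    assuming the host of rank r receives weight 1 / r**s (Zipf)."""
    weights = [1 / r**s for r in range(1, num_hosts + 1)]
    top_k = max(1, round(num_hosts * top_fraction))
    return sum(weights[:top_k]) / sum(weights)


for s in (0.5, 1.0, 1.5):
    share = top_share(10_000, 0.01, s)
    print(f"s={s}: top 1% of 10,000 hosts serve {share:.0%} of requests")
```

With s = 1, the top 1% of hosts serve about 53% of requests, the same order as the Gnutella measurement; a steeper exponent concentrates traffic even further.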

Power Law

How were these issues addressed? BitTorrent relied on the share ratio (data uploaded divided by data downloaded), while other protocols introduced primitive token systems. These tokens were usually called credits or points and were distributed to reward good behavior (behavior that keeps the protocol healthy) and to maintain the network (for example, by managing content through reputation ratings). For this history, I strongly recommend John Backus’s articles (since deleted, but still readable through the Internet Archive):

-

Fat Protocols Aren’t New (https://qhexub4or3ek2jwgl7cokovs4yyb7pefuxpbum4v2ceptfzsis5q.arweave.net/gcl6B46OyK0mxl_E5Tqy5jAfvIWl3hozldCI-ZcyRLs)

-

What If BitTorrent had a Token? (https://hwfgxuwbbbn5w2zay3tjplvhyuv2dhfep7wc7vnj4ledn6vy3e7q.arweave.net/PYpr0sEIW9trIMbml66nxSuhnKR_7C_VqeLINvq42T8)
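The share-ratio and credit mechanisms described above can be sketched in a few lines. This example computes a BitTorrent-style share ratio and adds a hypothetical credit ledger in the spirit of the early "points" systems: uploads earn credits, downloads spend them, and peers with a poor ratio are refused. The threshold, credit rate, starting balance, and names are illustrative assumptions, not any specific client’s or tracker’s rules.

```python
from dataclasses import dataclass


@dataclass
class Peer:
    name: str
    uploaded_mb: float = 0.0
    downloaded_mb: float = 0.0
    credits: float = 10.0  # hypothetical starting balance for new peers

    def share_ratio(self) -> float:
        """Share ratio: data uploaded divided by data downloaded."""
        if self.downloaded_mb == 0:
            return float("inf")  # nothing downloaded yet
        return self.uploaded_mb / self.downloaded_mb


MIN_RATIO = 0.5      # hypothetical rule, e.g. a private tracker's minimum
CREDIT_PER_MB = 0.1  # hypothetical earn/spend rate


def record_upload(peer: Peer, mb: float) -> None:
    """Sharing is rewarded with credits."""
    peer.uploaded_mb += mb
    peer.credits += mb * CREDIT_PER_MB


def request_download(peer: Peer, mb: float) -> bool:
    """Allow a download only if the peer can pay and keeps a healthy ratio."""
    cost = mb * CREDIT_PER_MB
    if peer.credits < cost:
        return False
    if peer.downloaded_mb > 0 and peer.share_ratio() < MIN_RATIO:
        return False
    peer.credits -= cost
    peer.downloaded_mb += mb
    return True


leech = Peer("leech")
first = request_download(leech, 50)   # allowed: spends 5 of 10 starting credits
second = request_download(leech, 50)  # refused: ratio is 0.0, nothing uploaded
seeder = Peer("seeder")
record_upload(seeder, 500)
third = request_download(seeder, 200)  # allowed: ratio stays at 2.5
print(first, second, third)  # True False True
```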

It is worth noting that Ethereum’s original vision included a distributed storage network as part of what it called the “trinity,” meant to provide the tools needed for the world computer to flourish. Gavin Wood is said to have proposed Swarm as the storage layer and Whisper as the messaging layer.

From there, the mainstream distributed storage networks were born. Everyone knows what happened next.

Distributed Storage Network Landscape

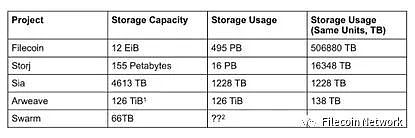

The DSN landscape is interesting: there is a wide gap in scale between the leader (Filecoin) and the emerging networks. Public perception holds that the storage field is dominated by two giants, Filecoin and Arweave, but by usage, Arweave ranks fourth, behind Filecoin, Storj, and Sia (although Sia’s usage appears to be declining). Even if we doubt Filecoin’s reported data and discount it by 90%, Filecoin’s usage is still about 400 times Arweave’s.
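Working the discount backward shows how large the raw gap is. Writing U_F and U_A for the usage reported by Filecoin and Arweave, the comparison above amounts to:

```latex
0.1\,U_F \approx 400\,U_A \quad\Longrightarrow\quad U_F \approx 4000\,U_A
```

Taken at face value, then, the reported figures put Filecoin’s usage at roughly 4,000 times Arweave’s.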

What can we infer from this? First, the current market does have dominant players, but whether that dominance lasts depends on whether their storage resources are actually useful.

These DSNs generally share the same architecture: node operators with large amounts of unused storage (hard drives) stake it to mine blocks, earning mining rewards for storing data. Networks differ in how they price storage and how (or whether) they implement permanent storage, but the most important differentiator is how easily and cheaply users can retrieve and process the data they store.
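The "stake storage to mine blocks" idea can be illustrated with a simplified election in which each operator’s chance of producing the next block is proportional to its committed capacity, roughly how Filecoin weights block production by storage power. This is a toy sketch: the operator names and capacities are hypothetical, and real networks rely on verifiable randomness and cryptographic proofs of storage rather than Python’s `random` module.

```python
import random
from collections import Counter


def pick_block_producer(capacity_tib: dict[str, float], rng: random.Random) -> str:
    """Choose an operator with probability proportional to committed storage."""
    operators = list(capacity_tib)
    weights = [capacity_tib[op] for op in operators]
    return rng.choices(operators, weights=weights, k=1)[0]


# Hypothetical operators and their committed capacity in TiB
capacity = {"op-small": 100.0, "op-mid": 300.0, "op-large": 600.0}
rng = random.Random(42)  # fixed seed so the simulation is reproducible
rounds = 10_000
wins = Counter(pick_block_producer(capacity, rng) for _ in range(rounds))
total = sum(capacity.values())
for op, cap in capacity.items():
    print(f"{op}: {wins[op] / rounds:.1%} of blocks vs {cap / total:.0%} of capacity")
```

Over many rounds, each operator’s share of blocks converges on its share of committed capacity, which is what makes idle hard drives worth staking.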

Comparison of Storage Network Capacity and Usage

Note:

1. Arweave’s capacity cannot be measured directly, but its mechanism encourages node operators to keep enough spare capacity (a buffer) for supply to grow with demand. How big is this buffer? We cannot tell, because it is not measurable.

2. Swarm’s actual network usage cannot be determined. The amount of storage that has been paid for is visible, but whether that storage is being used is unknown.

The projects listed in the table are all currently live; there are also planned DSNs, such as EthStorage and MaidSafe.

FVM

Before discussing the FVM, it is necessary to mention the FEVM (Filecoin Ethereum Virtual Machine), which Filecoin recently launched. The FVM is a WASM-based hypervisor virtual machine that supports many runtimes; the FEVM, for example, is an Ethereum Virtual Machine runtime on the FVM/FIL network. The FEVM deserves emphasis because it triggered an explosion of smart contract activity on FIL. Before the FEVM launched in March, there were only 11 active smart contracts on FIL; afterward, the number rose dramatically. This highlights the benefits of composability: work already done in Solidity can be reused to build new businesses on top of FIL, enabling innovations such as the quasi-liquid staking primitives developed by the GLIF team and new forms of market finance. We believe the FVM will accelerate the growth of storage providers by improving capital efficiency (storage providers need FIL to actively provide storage and seal storage deals). Unlike traditional liquid staking derivatives (LSDs), however, these products must evaluate the credit risk of each individual storage provider.
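Why individual credit risk matters can be shown with the standard expected-loss formula, exposure × probability of default × loss given default, applied to a hypothetical pool that lends depositors’ FIL to storage providers. This is not how GLIF or any other protocol actually prices risk; the provider names, default probabilities, and loss assumptions are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Loan:
    provider: str
    exposure_fil: float        # FIL lent to this storage provider
    default_prob: float        # assumed probability of default over the period
    loss_given_default: float  # fraction of exposure lost if the provider defaults


def expected_loss(loans: list[Loan]) -> float:
    """Sum of exposure * PD * LGD across all loans in the pool."""
    return sum(
        loan.exposure_fil * loan.default_prob * loan.loss_given_default
        for loan in loans
    )


def risk_adjusted_rate(pool_fil: float, shares: float, loans: list[Loan]) -> float:
    """FIL backing each pool share after subtracting expected credit losses."""
    return (pool_fil - expected_loss(loans)) / shares


loans = [
    Loan("sp-established", 10_000, 0.01, 0.5),  # hypothetical low-risk provider
    Loan("sp-new", 5_000, 0.10, 0.8),           # hypothetical riskier provider
]
print(expected_loss(loans))
print(risk_adjusted_rate(20_000, 20_000, loans))
```

Here the smaller loan to the riskier provider contributes 400 of the 450 FIL of expected loss, which is exactly the kind of provider-level difference a plain LSD never has to price.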

Permanent Storage

I believe Arweave is the most vocal about this, as its slogan directly addresses the deepest desire of Web 3 participants: permanent storage.

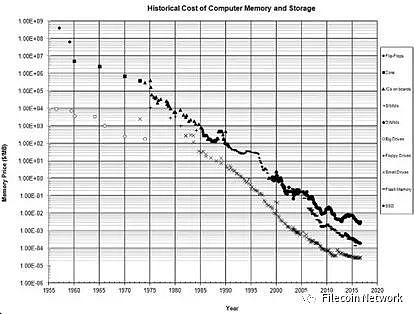

But what does permanent storage actually mean? It is undoubtedly an attractive feature, but in reality, execution is everything, and execution depends on sustainability and on cost to the end user. Arweave uses a pay-once, store-forever model (an upfront payment covering 200 years of storage, plus an assumed decline in storage costs). This pricing model works for the target asset in a deflationary pricing environment, relying on continuous goodwill accrual (i.e., old transactions subsidize new ones), but the opposite holds in an inflationary environment. Historically, the model has posed no problem because the cost of computer storage has trended downward ever since storage existed, but looking only at hard-disk costs gives an incomplete picture.
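The arithmetic behind a one-time payment can be sketched as a geometric series: prepaying T years of storage whose annual cost changes by a fixed rate each year. This assumes a constant rate of change and ignores replication, token price, and Arweave’s actual endowment mechanics, so it is only a simplification; the cost and rate values are hypothetical.

```python
def upfront_cost(annual_cost: float, years: int, annual_decline: float) -> float:
    """Prepay `years` of storage when the yearly cost falls by `annual_decline`
    (0.05 means 5% cheaper each year; a negative value models inflation)."""
    return sum(annual_cost * (1 - annual_decline) ** t for t in range(years))


base = 1.0  # hypothetical cost of storing the data for one year today
for decline in (0.05, 0.0, -0.02):
    total = upfront_cost(base, 200, decline)
    print(f"cost change {-decline:+.0%}/yr -> 200-year prepayment = {total:,.1f}x annual cost")
```

With costs falling 5% a year, 200 years of storage costs about 20 years’ worth up front; with flat costs it costs the full 200 years’ worth; with 2% annual cost inflation it exceeds 2,500 years’ worth. That asymmetry is why the model depends on a deflationary environment.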

Arweave pursues permanent storage through the incentives of its Succinct Proofs of Random Access (SPoRA) algorithm, which encourages miners to store all data and to prove that they can access randomly selected historical blocks. Doing so increases a miner’s probability of being selected to produce the next block (and earn the corresponding reward).
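A heavily simplified sketch of the random-access idea: each mining attempt derives a pseudo-random "recall" chunk from the previous block hash and a nonce, and only a miner that actually stores that chunk can use the attempt. It shows why storing more of the dataset raises a miner’s odds. The chunk count, hashing scheme, and function names are assumptions; real SPoRA also requires fast access to the chunk and a resulting hash that meets the difficulty target.

```python
import hashlib
import random

NUM_CHUNKS = 1_000  # hypothetical size of the stored dataset, in chunks


def recall_chunk(prev_block_hash: bytes, nonce: int) -> int:
    """Derive which historical chunk this mining attempt must read."""
    digest = hashlib.sha256(prev_block_hash + nonce.to_bytes(8, "big")).digest()
    return int.from_bytes(digest, "big") % NUM_CHUNKS


def usable_attempts(stored: set[int], prev_block_hash: bytes, attempts: int) -> int:
    """Count attempts for which the miner holds the required recall chunk."""
    return sum(recall_chunk(prev_block_hash, n) in stored for n in range(attempts))


rng = random.Random(7)
prev = hashlib.sha256(b"previous block").digest()
for fraction in (0.1, 0.5, 1.0):
    stored = set(rng.sample(range(NUM_CHUNKS), round(NUM_CHUNKS * fraction)))
    hits = usable_attempts(stored, prev, 10_000)
    print(f"stores {fraction:.0%} of data -> {hits / 10_000:.1%} of attempts usable")
```

The share of usable attempts tracks the share of data stored, so a miner that drops half the data roughly halves its chance of winning a block. That is an incentive, not a guarantee.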

Although this mechanism gives node operators a reason to store all data, it does not guarantee that they will. Even with high redundancy and conservative heuristics for setting model parameters, the risk of loss can never be ruled out entirely.
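A quick calculation shows why the risk never reaches zero. Assume each of n replicas is lost independently with probability p in a given year, with no repair in between (both numbers and the independence assumption are hypothetical). The data disappears only if every replica fails, so the annual loss probability is p^n, and the chance of losing it at some point in T years is 1 − (1 − p^n)^T.

```python
import math


def loss_probability(p_replica: float, replicas: int, years: int) -> float:
    """Probability that all replicas are lost in at least one of `years` years,
    assuming independent failures and no repair within a year."""
    annual_loss = p_replica ** replicas
    # 1 - (1 - x)**T computed with log1p/expm1 to stay accurate for tiny x
    return -math.expm1(years * math.log1p(-annual_loss))


for n in (1, 3, 5, 10):
    print(f"{n:>2} replicas: {loss_probability(0.05, n, 200):.2e} chance of loss over 200 years")
```

Each extra replica shrinks the risk by a large factor, but the result stays strictly positive for any finite number of replicas.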

The only way to achieve permanent storage is to explicitly require someone (or everyone) to carry it out, and to remove them if they fail to perform. How do we motivate people to take on this responsibility? The heuristic approach itself is not the problem, but the best way to implement and price permanent storage remains to be found.

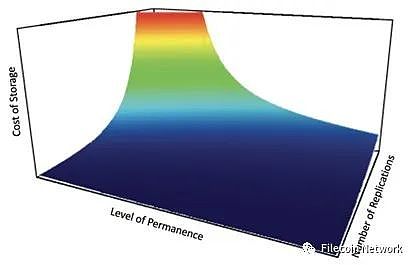

Ultimately, we need to ask what level of security people will accept for permanent storage, and then consider how to price it over a given time frame. In practice, consumer preferences fall along a spectrum of replication (permanence), and consumers should be able to choose a level of security and pay a corresponding price.

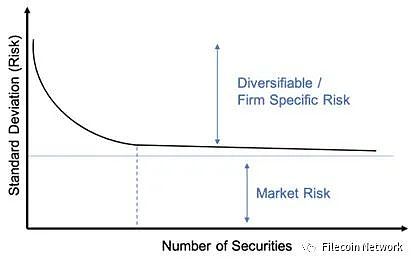

The traditional investment literature thoroughly documents how diversification reduces overall portfolio risk. The first few additions to a portfolio reduce risk substantially, but as holdings grow, adding another stock brings almost no benefit.
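The diminishing benefit of diversification has a standard closed form. For an equally weighted portfolio of n assets, each with variance σ² and pairwise correlation ρ (simplifying assumptions), the portfolio variance is:

```latex
\sigma_p^2 = \frac{\sigma^2}{n} + \frac{n-1}{n}\,\rho\,\sigma^2
           = \sigma^2\left(\rho + \frac{1-\rho}{n}\right)
           \;\xrightarrow{\;n \to \infty\;}\; \rho\,\sigma^2
```

Each additional asset removes less variance than the one before, and the floor ρσ² is risk that no amount of diversification can remove. By analogy, correlated failures between replicas play the role of ρ in storage.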

I believe that in a DSN, if replication does not scale in proportion to storage cost and security, pricing for replication beyond the default level should resemble the curve in the figure below:

Looking ahead, I am most excited about the opportunities that DSNs with easily accessible smart contracts can bring to the permanent storage market. I think consumers will benefit if the market offers a range of permanent storage options.

We can think of the green area in the figure above as a space for experimentation: perhaps storage costs can be reduced exponentially without significantly changing the replication level or the degree of permanence.

Permanent storage can also be achieved by replicating across different storage networks, not just within a single one. This path is more ambitious, but it naturally yields different levels of permanence. The biggest question is whether spreading permanent storage across DSNs can make it a free lunch, just as diversification reduces risk in a stock portfolio.

The possibility does exist, but it requires accounting for overlapping node providers and other complicating factors. Insurance is another option, for example, letting node operators accept stricter penalty (slashing) conditions in exchange for providing guarantees. Such a system is hard to maintain because it spans multiple codebases that must be coordinated. Nevertheless, we look forward to designs like this advancing the concept of permanent storage across the industry.
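The overlapping-provider problem can be quantified with a simple common-mode failure model. Suppose replicas are spread across several networks, but with probability q per year a shared operator or shared dependency fails and takes every replica down at once; otherwise, each replica fails independently with probability p. The annual loss probability is then q + (1 − q)·p^n, which never falls below q however many networks are added. The model and its parameters are hypothetical.

```python
def annual_loss_with_overlap(p_replica: float, replicas: int, q_common: float) -> float:
    """Annual probability of losing every replica when a common-mode failure
    (probability q_common) wipes out all copies at once, and otherwise each
    replica fails independently with probability p_replica."""
    return q_common + (1 - q_common) * p_replica ** replicas


for n in (2, 4, 8):
    independent = annual_loss_with_overlap(0.05, n, 0.0)
    overlapping = annual_loss_with_overlap(0.05, n, 0.001)
    print(f"{n} networks: independent {independent:.1e}, with 0.1% overlap {overlapping:.1e}")
```

This is the storage counterpart of risk that cannot be diversified away in a stock portfolio: once replicas share an operator, adding more networks stops helping.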

The First Commercial Market in Web3

Matti recently tweeted (https://twitter.com/mattigags/status/1627607780692484098?s=20) that storage is a Web3 use case with real business value. I think that is plausible.

Recently, I spoke with a Layer 1 blockchain team and told them that, as stewards of an L1, they have an obligation to fill their block space and, more importantly, to fill it with economic activity. This industry often forgets the second half of its name: the currency part.

Any protocol that issues a token needs to support some form of economic activity if it wants to avoid being shorted. For L1 protocols, the native token pays for computation (executing transactions), charged as gas fees. The more economic activity, the more gas is consumed and the greater the demand for the token. This is the cryptoeconomic model; other protocols may instead choose to act as an intermediary layer offering SaaS.

The cryptoeconomic model is especially powerful when combined with a specific commodity. For Layer 1 protocols, that commodity is computation. For financial transactions, however, volatile execution prices severely hurt the user experience; in a swap, the execution fee should be the least important part.

Given this poor user experience, filling block space with economic activity is very difficult. Scaling solutions keep emerging to help (I highly recommend the “Interplanetary Consensus White Paper” (https://raw.githubusercontent.com/consensus-shipyard/IPC-design-reference-spec/main/main.pdf), available as a PDF), but the Layer 1 market is crowded, and it is not easy for any protocol to attract enough economic activity.

However, when computation is paired with an additional commodity, the problem becomes somewhat simpler. For distributed storage networks, that commodity is obviously storage space. Data storage, along with the financial and securitization products derived from it, can immediately fill the gap in economic activity.

But distributed storage also needs to offer effective solutions for traditional enterprises, especially those that must comply with data storage regulations. That means meeting audit standards, respecting geographic restrictions, and optimizing the user experience.

In the second part of our Middleware Paper (https://zeeprime.capital/web-3-middleware), we discussed Banyan (https://banyan.computer/), whose product is on the right track here. Banyan works with DSN node operators to obtain SOC certification for the storage they provide and offers a simple user interface that streamlines file uploads.

But that’s not enough.

Stored content also needs to be easily accessible through an efficient retrieval market. Zee Prime sees promise in building content delivery networks (CDNs) on top of DSNs. In essence, a CDN caches content close to users to reduce latency when retrieving it.
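The caching idea behind a CDN can be sketched as an edge cache in front of a slower DSN origin: popular content is served from the edge, while misses fall back to the network and are then cached, evicting the least recently used item when the cache is full. The latency values, the `fetch_from_dsn` function, and the content IDs below are hypothetical placeholders, not a real retrieval API.

```python
from collections import OrderedDict

EDGE_LATENCY_MS = 20     # hypothetical latency of a nearby cache hit
ORIGIN_LATENCY_MS = 800  # hypothetical latency of retrieving from the DSN


def fetch_from_dsn(cid: str) -> bytes:
    """Placeholder for a real DSN retrieval (e.g. through a gateway)."""
    return f"content of {cid}".encode()


class EdgeCache:
    """Least-recently-used cache that tracks total retrieval latency."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.items: OrderedDict[str, bytes] = OrderedDict()
        self.total_latency_ms = 0

    def get(self, cid: str) -> bytes:
        if cid in self.items:
            self.items.move_to_end(cid)  # mark as most recently used
            self.total_latency_ms += EDGE_LATENCY_MS
            return self.items[cid]
        data = fetch_from_dsn(cid)  # cache miss: go to the origin network
        self.total_latency_ms += ORIGIN_LATENCY_MS
        self.items[cid] = data
        if len(self.items) > self.capacity:
            self.items.popitem(last=False)  # evict the least recently used item
        return data


cache = EdgeCache(capacity=2)
for cid in ["video-a", "video-a", "video-b", "video-a", "video-c", "video-b"]:
    cache.get(cid)
print(cache.total_latency_ms)  # 3240: four misses at 800 ms plus two hits at 20 ms
```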

We believe this is the next key to DSN adoption, because it enables fast video loading (think decentralized Netflix, YouTube, and TikTok). Glitter, a company in our portfolio, is a representative project in this area. It focuses on DSN indexing, a piece of critical infrastructure that can make retrieval markets more efficient and enable richer use cases.
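As a rough picture of what DSN indexing provides, the sketch below builds a keyword index over file metadata so a client can find content identifiers without scanning the network. It is a generic inverted index, not Glitter’s design; the metadata records and CIDs are made up.

```python
from collections import defaultdict


def build_index(records: dict[str, str]) -> dict[str, set[str]]:
    """Map each lowercase keyword in a file's description to the CIDs containing it."""
    index: dict[str, set[str]] = defaultdict(set)
    for cid, description in records.items():
        for word in description.lower().split():
            index[word].add(cid)
    return index


def search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return the CIDs that match every word in the query (AND semantics)."""
    words = query.lower().split()
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


records = {  # hypothetical metadata keyed by made-up content IDs
    "cid-1": "cooking tutorial video",
    "cid-2": "cycling race video",
    "cid-3": "cooking recipe ebook",
}
index = build_index(records)
print(sorted(search(index, "cooking video")))  # ['cid-1']
```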

These types of products have shown strong product-market fit, and demand for them in Web 2 is high. Nevertheless, many still face friction, and the permissionless nature of Web 3 may be a boon for them.

The Meaning of Composability

We believe an excellent opportunity in the DSN field lies right in front of us. In the following two articles, Jnthnvctr.eth (https://twitter.com/jnthnvctr) discusses how the market is developing and some upcoming products (using Filecoin as an example):

-

State and Direction of Filecoin (https://blog.filecointldr.io/state-and-direction-of-filecoin-summarized-4b90c59e3cca)

-

Business Models on the FVM (https://blog.filecointldr.io/business-models-on-the-fvm-23cd71fdd3f1)

The most interesting point is the potential to combine off-chain computation with storage and on-chain computation. Because providing storage already requires computational power, this is a natural combination that can increase business activity on DSNs and open up new use cases.

The launch of the FEVM enables many new experiments and brings fun and competition to the storage field. Entrepreneurs looking to build new products can browse the list of products Protocol Labs wants to see built (https://rfs.fvm.dev/); building them may earn rewards.

Web 2 revealed the gravity of data: companies that collect or create large amounts of data benefit by keeping that data closed to protect their interests.

If our ideal of user-controlled data becomes mainstream, the dynamics of value accrual will change. Users become the main beneficiaries; monetization tools that unlock this potential by exchanging data for cash flow benefit as well; and the ways data is stored and accessed change dramatically. This kind of data can naturally live on a DSN, which can profit from it through a powerful query market. This is a shift from exploitation to liquidity.

There may be even more magical developments waiting for us.

When envisioning the future of distributed storage, consider how it might interact with future operating systems like Urbit. Urbit is a personal server built on open-source software that lets users participate in P2P networks. It is a truly decentralized operating system that can be self-hosted and interact with the internet in a P2P manner.

If the future unfolds as Urbit’s followers hope, distributed storage solutions will undoubtedly become a key component of personal technology stacks. Users could store all their personal data encrypted on a DSN and coordinate actions through the Urbit operating system. We can also expect further integration of distributed storage with Web 3 and Urbit, especially through projects like Uqbar Network (https://uqbar-network.gitbook.io/uqbar/), which brings smart contracts into the Nock environment.

This is the power of composability: slow accumulation eventually produces delightful results. What starts as small experiments can grow into a revolution, pointing to a new way of living in a hyper-connected world. Urbit may not be the ultimate answer to these questions (and it has its critics), but it shows how these efforts can converge into a single current that shapes the future.


We will continue to update Gambling Chain; if you have any questions or suggestions, please contact us!